How To Integrate Analytics To Slack With the Help of ChatGPT


In my previous article, "Is NLQ the Future of Analytics?", I discussed the need to unify the semantic layer to create more effective natural language processing (NLP) solutions in analytics. A few days after the article's publication, ChatGPT was announced, changing the landscape completely. Or did it?

ChatGPT is one of the most revolutionary and promising projects I have seen in the past few years. As I tested it, I discovered that the semantic layer's unification might be a necessity after all. Let's demonstrate it with an example.

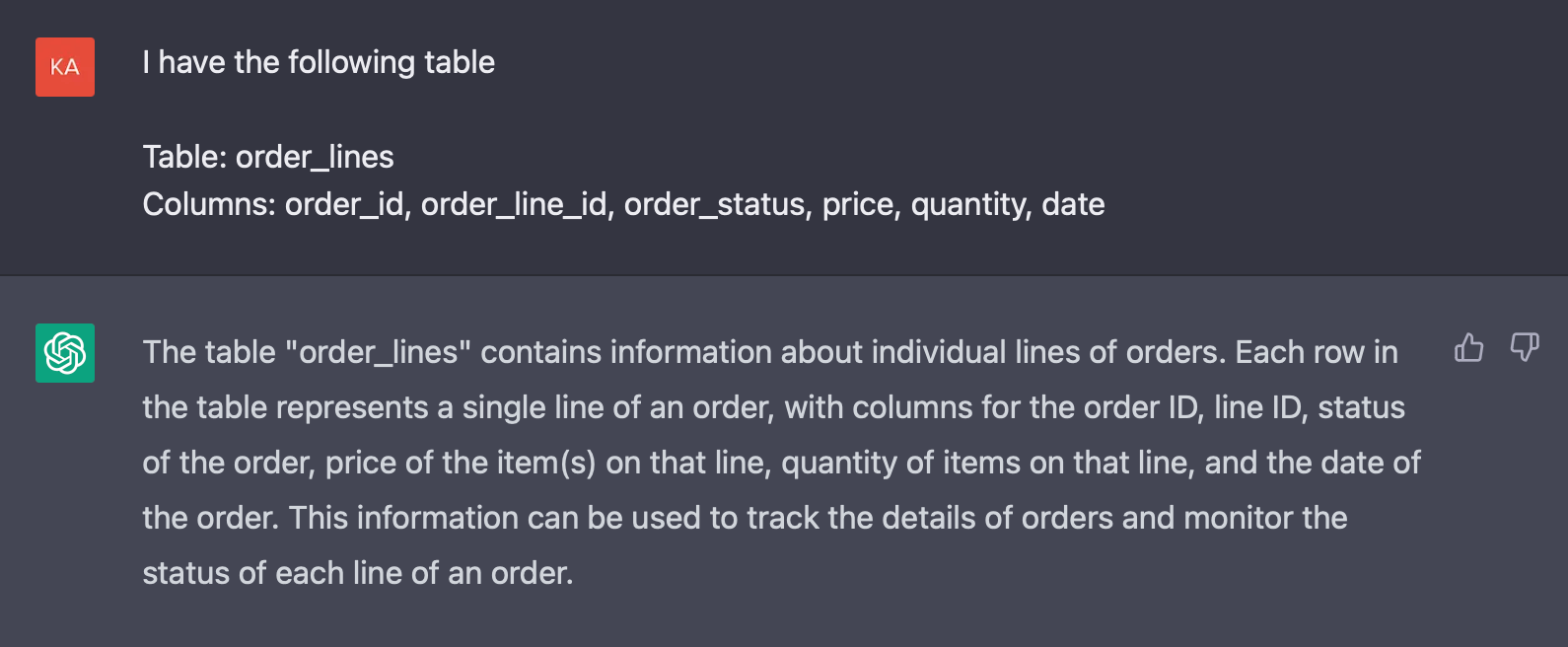

We can describe the state of our database to ChatGPT. In our simplified use case, we have only one table, “order_lines.”

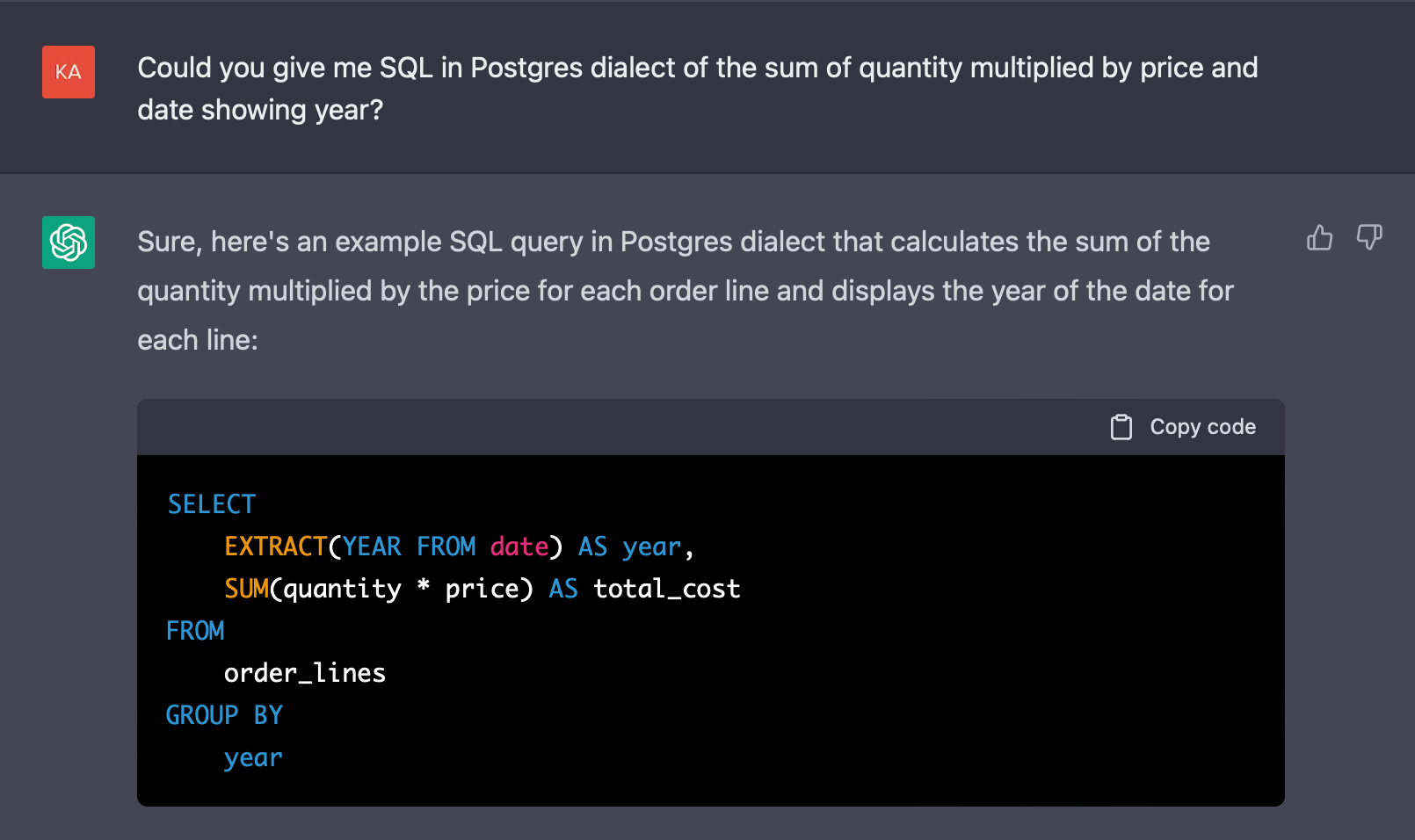

We will make an SQL query on top of this table. First, let’s use ChatGPT to get the SQL query in the Postgres dialect.

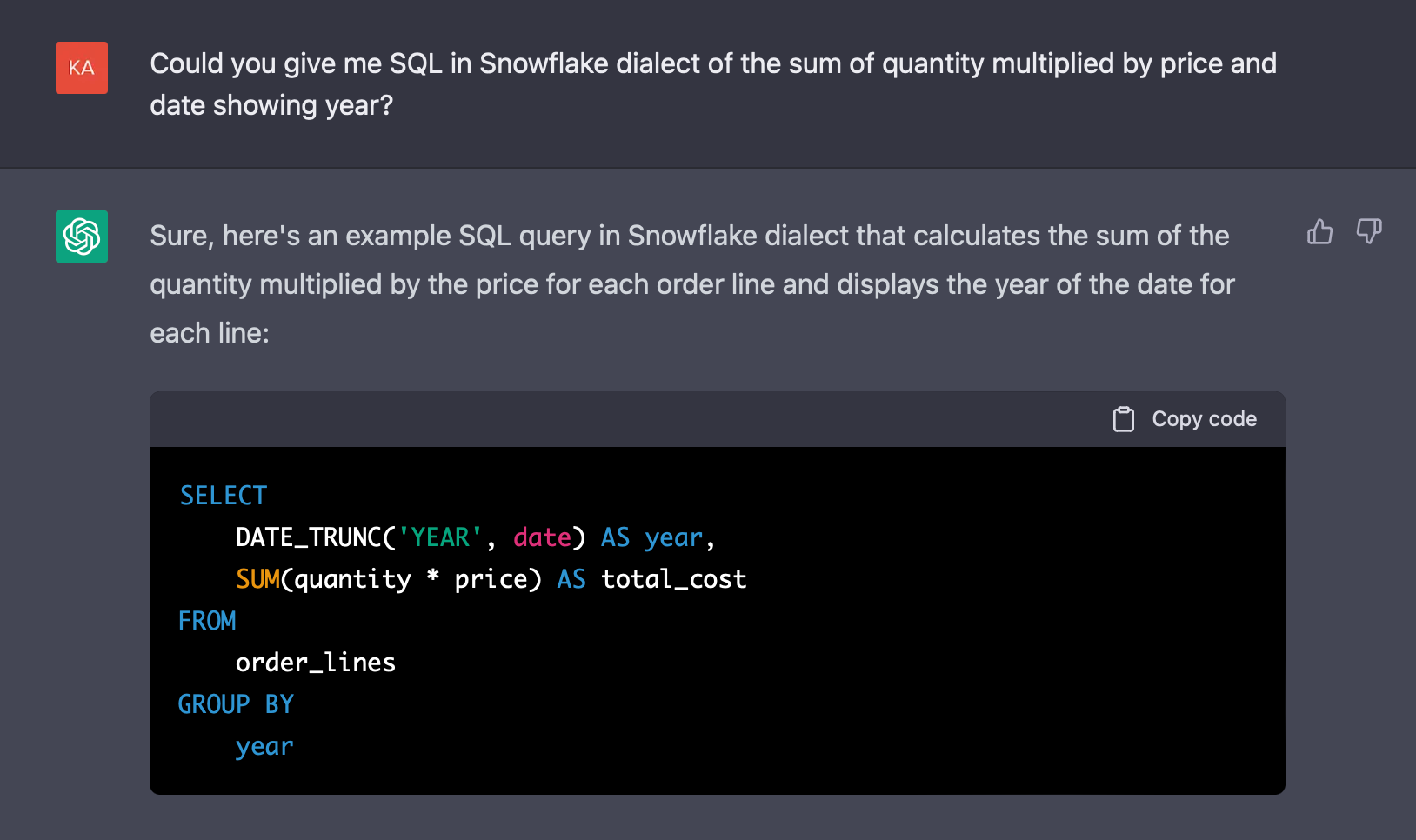

ChatGPT returns the correct SQL query. Let's say we migrated our data source from Postgres to Snowflake and want to query in the Snowflake dialect.

As you can see in the example above, ChatGPT can handle different SQL dialects, which is mind-blowing and makes integrating with ChatGPT an encouraging prospect.

I promised in my previous article that I would write a follow-up presenting the implementation of an NLP tool on top of an analytics tool. As the title suggests, I will present an implementation of ChatGPT in the popular messaging application Slack on top of GoodData. Fasten your seatbelts, and let's go.

Integration

The crucial part of the integration is the openness of our tools. Fortunately, both Slack and GoodData provide a solid Python SDK and comprehensive documentation. At the time of writing, there is no official tool for ChatGPT that could be used for integration, though OpenAI says that “ChatGPT is coming to our API soon”. Fortunately, the community once again shows its importance and power with a reverse-engineered Python package that supports ChatGPT.

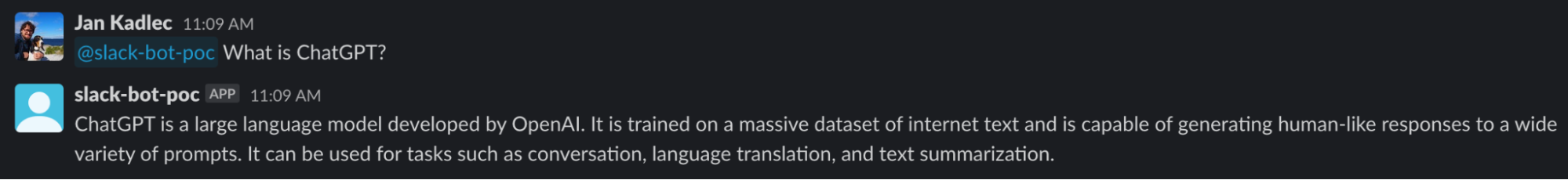

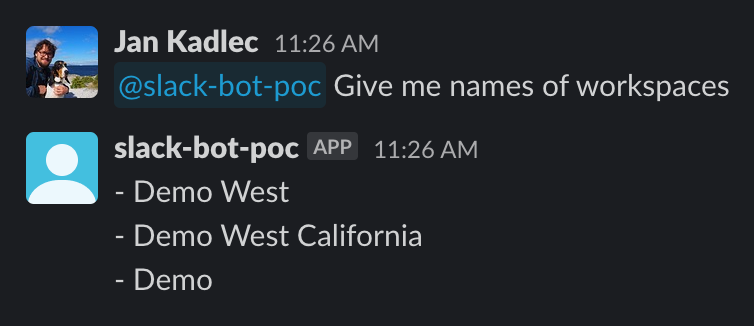

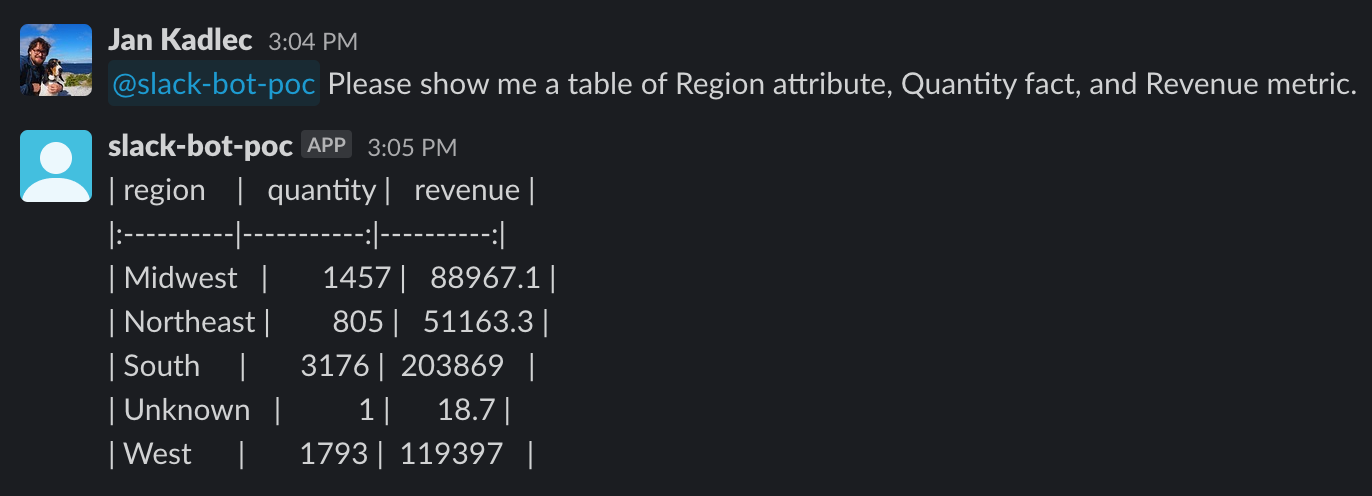

We have everything we need to start integrating. Let's start with the more manageable task: integrating Slack with ChatGPT. The example below shows the Slackbot successfully responding with ChatGPT's answers. The integration is trivial and takes just a few lines of code. You can find the code snippet for this use case and the other use cases in my GitHub repository. The demo requires access to a running instance of GoodData, so feel free to use GoodData's trial for that.
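To give a rough idea of what such a bot can look like, here is a minimal sketch. It is not the code from the repository. It assumes Slack's Bolt for Python package (`slack_bolt`) running in Socket Mode, with tokens in the `SLACK_BOT_TOKEN` and `SLACK_APP_TOKEN` environment variables. The `ask_chatgpt` callable is a hypothetical stand-in for whatever ChatGPT client you use, and the helper names are made up for illustration.

```python
import os
import re
from typing import Callable


def strip_mention(text: str) -> str:
    """Remove Slack user mentions such as <@U123ABC> from a message."""
    return re.sub(r"<@[A-Z0-9]+>", "", text).strip()


def handle_mention(event: dict, say: Callable[[str], None],
                   ask_chatgpt: Callable[[str], str]) -> None:
    """Forward the mentioned message to ChatGPT and post the answer back."""
    question = strip_mention(event.get("text", ""))
    if not question:
        say("Please ask me a question.")
        return
    # ask_chatgpt is a hypothetical client function: prompt in, answer out.
    say(ask_chatgpt(question))


def run_bot(ask_chatgpt: Callable[[str], str]) -> None:
    """Wire the handler into Slack Bolt (requires the slack_bolt package)."""
    from slack_bolt import App
    from slack_bolt.adapter.socket_mode import SocketModeHandler

    app = App(token=os.environ["SLACK_BOT_TOKEN"])

    @app.event("app_mention")
    def on_mention(event, say):
        handle_mention(event, say, ask_chatgpt)

    # Socket Mode keeps the demo local; no public HTTP endpoint is needed.
    SocketModeHandler(app, os.environ["SLACK_APP_TOKEN"]).start()
```

Keeping `handle_mention` free of Slack and ChatGPT imports means it can be tried with plain Python callables before any tokens are configured. Calling `run_bot(my_client)` then starts the real bot.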

Now comes the trickier part: integrating ChatGPT with GoodData. Let’s say we want to access information from the analytics platform via Slack. Thankfully, we can use ChatGPT to do that, although teaching ChatGPT something new can be a challenge. When I initially asked ChatGPT if it could connect to a SaaS solution, the answer was no. I then thought about how I could teach ChatGPT something new in the simplest way. I decided to try sending it GoodData’s metadata, having it analyze the metadata, and then asking it related questions, which worked. However, there is a limit on the amount of data that can be sent to ChatGPT, so sending massive chunks of data is not possible.
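The metadata trick boils down to building a prompt that embeds the metadata and checking its size before sending it. The sketch below illustrates that idea only. The function name, the prompt wording, and the `max_chars` budget are assumptions made for illustration, since the actual limit is not stated here and depends on the ChatGPT client in use.

```python
import json


def build_metadata_prompt(metadata: dict, question: str, max_chars: int = 4000) -> str:
    """Embed metadata in a prompt, refusing input that exceeds the budget."""
    # Compact JSON keeps the prompt as small as possible.
    payload = json.dumps(metadata, separators=(",", ":"), sort_keys=True)
    prompt = (
        "Here is the metadata of my analytics workspace as JSON:\n"
        f"{payload}\n"
        f"Using only this metadata, answer: {question}"
    )
    # max_chars is a hypothetical budget, not a documented ChatGPT limit.
    if len(prompt) > max_chars:
        raise ValueError(
            f"Prompt is {len(prompt)} characters, over the {max_chars} budget; "
            "send a smaller slice of the metadata."
        )
    return prompt
```

Failing loudly on oversized input is a deliberate choice: silently truncating JSON would hand ChatGPT a broken document and make its answers harder to trust.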

Let's try to do something more complex — fetch analytics results. Thankfully, GoodData is based on a headless BI concept, and thanks to the semantic layer, we can use the power of metrics and not be limited to knowledge of tables. We say what we want to see, and the analytics engine does the rest.

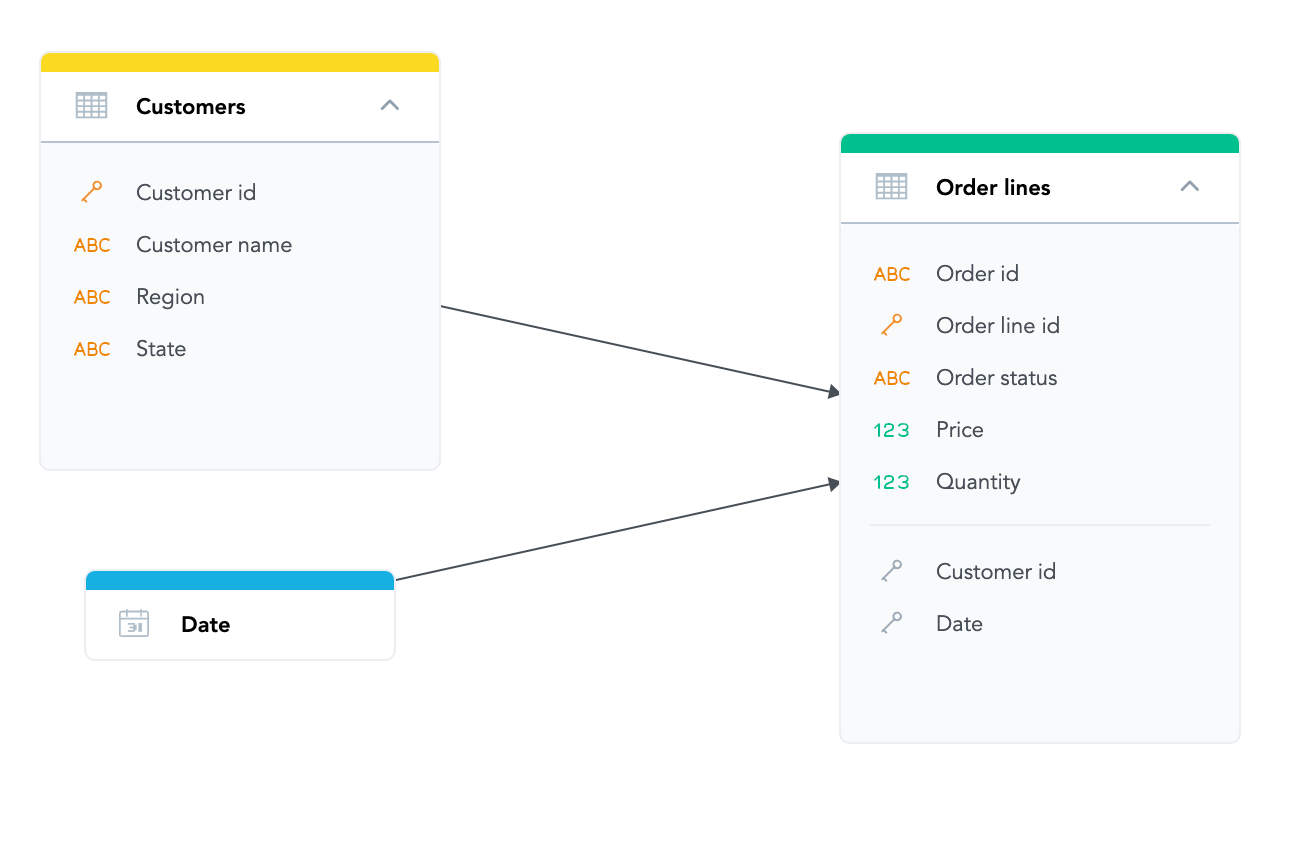

Let's consider the following tables, or datasets, that are part of the logical data model.

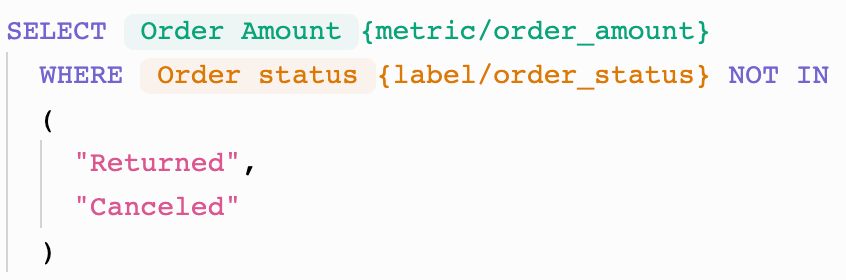

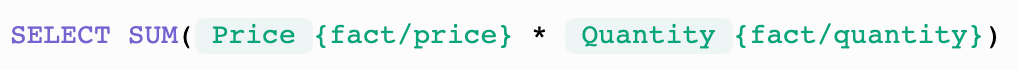

We would like to see values for Region (attribute — string value), Quantity (fact — numeric value), and Revenue (metric). We have direct access to Region and Quantity, but we need to define the Revenue. We can use metrics for that.

You can see the definition of Revenue as a metric above. Notice that we do not need to specify the Order status table and can reuse the Order Amount metric.

Now, let’s teach ChatGPT how to fetch the data. Again, we need to introduce a small hack to get ChatGPT to do what we want. We present the API request body to ChatGPT and have it pick out the attributes, facts, and metrics mentioned in the user's input. We can then call GoodData's Python SDK to fetch the data and show it in Slack.
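As a rough sketch of the second half of that flow, the code below parses a ChatGPT reply and turns it into a column specification. The JSON shape with `attributes`, `facts`, and `metrics` keys is a hypothetical stand-in for the real request body, and the object ids are invented. The `fetch_frame` function assumes the `gooddata-pandas` package and its `GoodPandas`, `data_frames`, and `not_indexed` interface, so check it against the SDK documentation for your version.

```python
import json


def parse_selection(reply: str) -> dict:
    """Turn ChatGPT's JSON reply into a column spec for the SDK."""
    # Take the outermost {...} in case the reply wraps the JSON in prose.
    start, end = reply.find("{"), reply.rfind("}")
    if start == -1 or end < start:
        raise ValueError("No JSON object found in the reply")
    selection = json.loads(reply[start:end + 1])

    columns = {}
    # Map each (hypothetical) key to the object-type prefix used in column ids.
    for key, prefix in (("attributes", "label"), ("facts", "fact"), ("metrics", "metric")):
        for object_id in selection.get(key, []):
            columns[object_id] = f"{prefix}/{object_id}"
    return columns


def fetch_frame(columns: dict, host: str, token: str, workspace_id: str):
    """Fetch the selected columns as a pandas DataFrame (needs gooddata-pandas)."""
    from gooddata_pandas import GoodPandas

    frames = GoodPandas(host, token).data_frames(workspace_id)
    return frames.not_indexed(columns=columns)
```

For a reply such as `{"attributes": ["region"], "facts": ["quantity"], "metrics": ["revenue"]}`, `parse_selection` yields the columns `label/region`, `fact/quantity`, and `metric/revenue`. The resulting DataFrame can be rendered with `to_string()` and posted back to Slack.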

Conclusion

Despite the current limitations of ChatGPT, there are ways of bypassing them. However, for future use, it would be really beneficial if OpenAI provided an intuitive way to enrich ChatGPT. Personally, I’d like to imagine an SDK for fine-tuning it or an option to provide a file or files with additional information.

ChatGPT's security, production readiness, and performance are other aspects worth mentioning. In the examples we have covered, we worked around ChatGPT's limitations by exposing GoodData's metadata, which might not be safe, because metadata can be sensitive.

As for production readiness, ChatGPT is only a playground for now, without any specific business goal, which is a pity. We would welcome a more precise specification of its use cases in the future.

Last but not least is performance, which also relates to production readiness. In production usage, we would welcome lower response latency as well as a way to specify responses, such as their length and format (text vs. file). Retraining also relates to performance, which brings us to more questions. How often should the model be retrained? Should we retrain it per session (that is, per chat) or per instance?

At the beginning of this article, I asked whether ChatGPT disrupts my idea of unifying the semantic layer for analytics tools. Well, even though ChatGPT may be a game changer, we should still focus on making things more accessible, not only for chatbots but also for ourselves and end users.

My last note is that I look forward to what else ChatGPT will bring us and what the competitors' response to ChatGPT is going to be. I am thinking of you, Google! ;) GPT-4 should have a giant impact, and it should be released soon. Let's be surprised by what it will bring us.

I would be more than happy if you shared your point of view on this topic with me. Let me know if you tried something similar and what you have learned.

Want to try it for yourself?

If you want to try the ChatGPT integration yourself, register for the free GoodData trial and set up the whole process using the demo repository.
