AI Data Analytics Tools in 2026: Top Platforms Compared

Summary

With 88% of organizations now using AI analytics tools, speed and automation are no longer enough. Decision-makers require governed business semantics, deterministic logic, transparent results, and enterprise-grade security before AI can influence decisions.

This comparison article reviews the best AI data analytics tools on the market: GoodData, ThoughtSpot, Microsoft Power BI, Tableau, Qlik, Yellowfin BI, Tellius, IBM watsonx / Cognos Analytics, Databricks, H2O.ai, and Incorta.

We have evaluated platforms across AI and ML capabilities, semantic control, metric governance, explainability, hallucination prevention, security, extensibility, and enterprise readiness.

Why Companies Worry About Implementing AI Analytics Solutions

Companies worry about implementing AI analytics tools because many lack governance, explainable logic, and stable metrics. When AI informs financial reporting, customer interactions, or operational decisions in industries such as healthcare and insurance, every answer must be traceable and defensible. Without that foundation, skepticism grows and adoption slows.

Most concerns cluster around five recurring issues:

- Inconsistent metric definitions across teams.

- Black-box outputs that cannot be inspected or validated.

- Silent metric drift over time.

- AI-generated dashboards built without governed logic.

- Increased operational risk when AI analytics is embedded into products and workflows.

Inconsistent Metric Definitions Across Teams

Many AI systems query raw tables or metadata rather than governed business definitions. When revenue, churn, or active customers are defined differently across teams, the same question can produce conflicting answers.

While AI can recognize column names and relationships, it does not automatically understand how the business defines its metrics. Without a centralized semantic layer to standardize calculations and time logic, inconsistencies multiply as usage grows.

Over time, these variations erode trust. Teams begin manually checking results, which slows decision-making and reduces confidence in AI-driven analysis.

Black-Box Outputs That Cannot Be Validated

Many AI-powered analytics platforms generate answers without exposing how those answers were derived. In generative AI workflows, users receive results but cannot inspect the queries, joins, filters, or metric logic behind them.

This lack of visibility creates risk, which can be devastating in finance and high-compliance environments. Frameworks such as GDPR, HIPAA, and SOC 2 require traceability when analytics influences reporting or regulated decisions.

If an insight cannot be validated, it cannot be defended. Harvard Business School recently noted that leaders must balance efficiency with 'cognitive engagement,' which requires AI outputs that are transparent and auditable.

Silent Metric Drift Over Time

AI analytics solutions can return different results over time, even when the underlying data remains unchanged. This metric drift often occurs when definitions are not version-controlled or when model behavior evolves.

When the same query produces different answers without a documented change in logic or data, stakeholders question reliability. Even small variations can weaken confidence in forecasts and KPI reporting.

Enterprises expect reproducibility. Stable metrics and controlled logic are essential if AI is to support long-term decision-making.

AI-Generated Dashboards Built Without Governed Logic

AI-driven analytics tools can now create dashboards and charts from simple prompts. This speeds up reporting, but also creates risk when the system guesses how metrics should be calculated rather than using defined business rules.

Dashboards generated by AI tools for data visualization may look clean and professional, but that does not mean the numbers are correct. If joins, filters, or time ranges are applied incorrectly, the results can misrepresent actual performance.

When leaders rely on these dashboards to guide strategy, even small errors in logic can lead to poor decisions.

Increased Operational Risk When Embedded into Products and Workflows

Embedded AI analytics magnifies risk; when analytics is integrated into SaaS platforms or operational systems, outputs influence decisions at scale.

An incorrect metric in an internal dashboard creates confusion. The same issue embedded in pricing engines, underwriting systems, or supply chain automation creates financial and regulatory exposure.

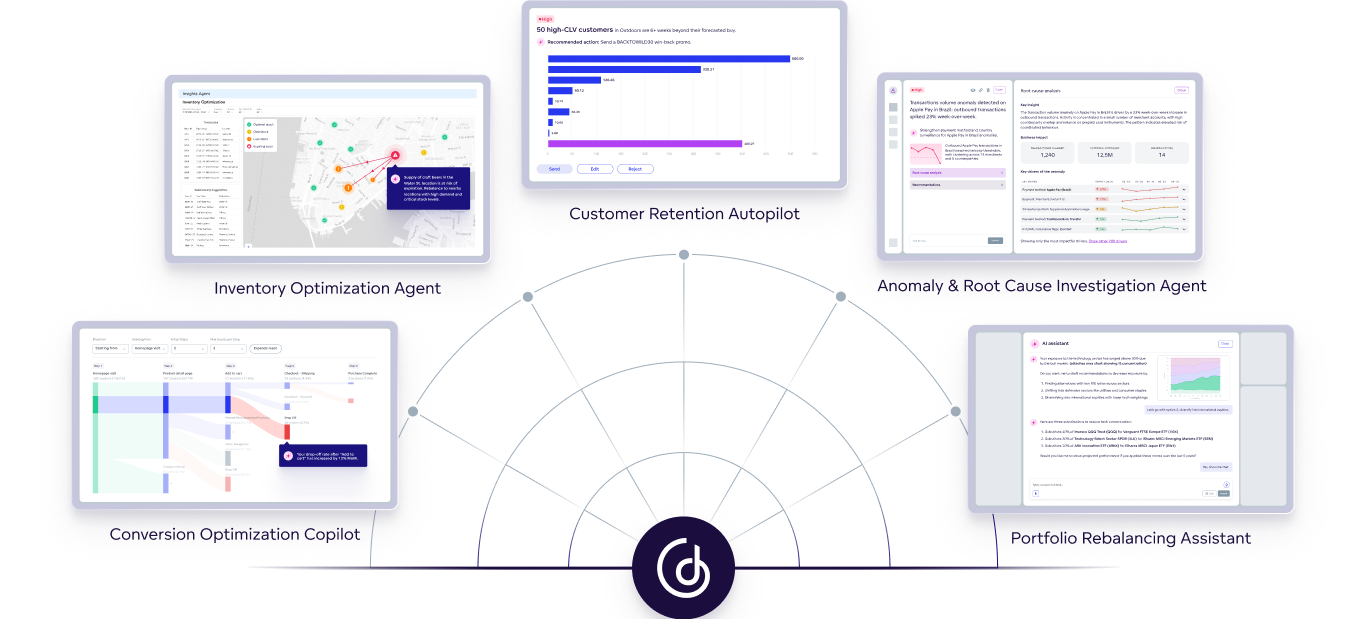

The transition from simple assistants to autonomous actors is moving faster than most governance frameworks can keep up with. Gartner forecasts that by 2026, 40% of enterprise applications will feature task-specific AI agents. As these agents begin to make independent decisions, hidden governance gaps rapidly transform into systemic operational risks.

What Companies Demand From AI Analytics Tools in 2026

Companies expect AI analytics tools to function as reliable decision infrastructure rather than experimental features. Early adoption has revealed that speed and automation alone do not justify deployment. Today, AI must be evaluated based on whether it can support financial, operational, and customer-facing decisions without introducing ambiguity or instability.

As a result, expectations have consolidated around three core requirements.

1. Clear Accountability and Governance Standards

Organizations no longer evaluate AI-powered analytics tools based on features alone. They assess whether outputs can be defended, audited, and governed over time.

In any meaningful comparison of AI analytics tools, buyers examine how metric definitions are managed, how access is controlled, and how changes are documented. Governance models, ownership of business logic, and auditability are now central evaluation criteria.

The key question is not how quickly the system responds, but whether the organization can stand behind the answer.

2. Centralized and Governed Business Definitions

AI data analytics tools must operate on structured business definitions rather than raw database schemas. Natural language querying is only reliable when it reflects consistent metric logic across dashboards, APIs, and teams.

A production-ready data analytics AI platform enforces centralized definitions, relationships, and time logic through a governed semantic layer. This ensures that every AI-generated insight aligns with formally approved business rules. When semantic control is built into the architecture, AI scales consistency.
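
To make the idea of a governed semantic layer concrete, here is a minimal Python sketch. It assumes a hypothetical in-memory registry (`SEMANTIC_LAYER`), a hypothetical `orders` table, and a hypothetical `compile_metric` helper; none of these names come from a specific product. The point is only that dashboards, APIs, and AI assistants all build queries from one approved definition instead of guessing from column names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Metric:
    """A governed metric: one approved definition shared by every consumer."""
    name: str
    expression: str      # SQL aggregate, e.g. "SUM(amount)"
    table: str
    filters: tuple = ()  # approved business rules applied on every query


# Hypothetical registry: the single source of truth for metric logic.
SEMANTIC_LAYER = {
    "revenue": Metric(
        name="revenue",
        expression="SUM(amount)",
        table="orders",
        filters=("status = 'completed'",),
    ),
}


def compile_metric(metric_name: str, group_by: Optional[str] = None) -> str:
    """Build SQL from the governed definition; unknown metrics are rejected."""
    if metric_name not in SEMANTIC_LAYER:
        raise KeyError(f"'{metric_name}' is not a governed metric")
    m = SEMANTIC_LAYER[metric_name]
    select = f"{m.expression} AS {m.name}"
    if group_by:
        select = f"{group_by}, {select}"
    sql = f"SELECT {select} FROM {m.table}"
    if m.filters:
        sql += " WHERE " + " AND ".join(m.filters)
    if group_by:
        sql += f" GROUP BY {group_by}"
    return sql


print(compile_metric("revenue", group_by="region"))
```

Because the `status = 'completed'` rule lives in the definition, every consumer that asks for revenue gets the same filter. A real implementation would also validate `group_by` against an approved list of dimensions rather than interpolating it directly.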

3. Built-In Transparency and Result Stability

Once deployed, AI systems must behave predictably and transparently. Explainable AI analytics requires that answers be traceable to underlying queries and metric calculations, allowing users to inspect how results were produced.

At the same time, organizations need protection against hallucinations and silent metric drift. Trusted AI analytics platforms use guardrails, deterministic query generation, and versioned logic to ensure that the same query produces the same result under the same conditions.

Critical Factors to Consider When Comparing AI Analytics Platforms

A structured comparison of AI analytics platforms should evaluate AI and ML capabilities, semantic control, metric governance, visibility and explainability, hallucination prevention, analytics-as-code extensibility, real-time support, and security and compliance readiness.

These criteria determine whether a platform can deliver reliable, governed, and scalable AI-driven insights.

AI & ML Capabilities

AI and machine learning capabilities shape how effectively a platform can surface insights, automate analysis, and support decision-making.

Core capabilities typically include:

- Natural Language Query for conversational exploration.

- Automated insights and anomaly detection.

- Predictive analytics and forecasting.

- Generative and agentic AI features for multi-step reasoning.

Note: These features improve accessibility and speed. However, they should be evaluated alongside governance and control. Advanced AI without structured oversight can increase variability rather than clarity.

Semantic Control and Ontology Ownership

Semantic control determines whether AI operates on raw schemas or governed business meaning. Leading platforms enforce a centralized semantic layer that defines metrics, relationships, and time logic consistently across use cases.

Key considerations include:

- A governed semantic layer as a unified business view.

- Centralized metric definitions.

- The ability to define or bring your own ontology.

- Data integration across multiple sources and systems.

Note: AI that operates only at the metadata level may interpret tables correctly but lacks enforced business context. Without semantic ownership, maintaining consistency becomes difficult as adoption grows.

Full Control Over Metrics and Business Logic

Metric governance ensures that definitions remain consistent across dashboards, APIs, and AI-generated outputs. Robust analytics solutions centralize business logic rather than distributing calculations across individual reports.

Evaluation factors include:

- Metric reuse and versioning.

- Consistency across dashboards, APIs, and AI responses.

- Structured change management processes.

Note: When metrics are reusable and version-controlled, AI becomes predictable. Without centralized control, small variations accumulate over time.
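
One way to picture protection against silent drift is a check that compares the current metric logic with the last approved snapshot. The sketch below is illustrative only: the dictionary layout (`version`, `logic`) and the function names are assumptions, not the format of any particular platform.

```python
import hashlib
import json


def fingerprint(definition: dict) -> str:
    """Stable hash of a metric definition (key order does not matter)."""
    canonical = json.dumps(definition, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def check_for_silent_drift(approved: dict, current: dict) -> list:
    """Return names of metrics whose logic changed without a version bump."""
    drifted = []
    for name, cur in current.items():
        old = approved.get(name)
        if old is None:
            continue  # new metric, nothing to compare against
        logic_changed = fingerprint(cur["logic"]) != fingerprint(old["logic"])
        if logic_changed and cur["version"] == old["version"]:
            drifted.append(name)
    return drifted


approved = {"churn": {"version": 1, "logic": {"expr": "lost / total", "window_days": 30}}}
silent = {"churn": {"version": 1, "logic": {"expr": "lost / total", "window_days": 90}}}
print(check_for_silent_drift(approved, silent))
```

Here the churn window changed from 30 to 90 days while the version stayed at 1, so the metric is flagged. The same change with the version raised to 2 would pass, because it is a documented change.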

Visibility and Validation of Results

Visibility into how answers are generated is essential for responsible AI deployment. Platforms must allow users to inspect queries and trace results back to defined metrics.

Important capabilities include:

- Deterministic versus probabilistic output behavior.

- Guardrails that constrain AI-generated answers.

- Protection against unintended metric changes over time.

Note: Strong platforms ensure that the same query produces consistent results under the same conditions. Stability is a system property, not a user responsibility.
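
The sketch below illustrates how guardrails and traceability can fit together, under stated assumptions: the AI model is assumed to emit a structured request (a metric name and a dimension) rather than free-form SQL, and the catalog (`METRICS`, `DIMENSIONS`), the `orders` table, and the `answer` function are hypothetical. It uses Python's built-in `sqlite3` module only to keep the example runnable.

```python
import sqlite3

# Hypothetical governed catalog: the only metrics and dimensions the AI may use.
METRICS = {"revenue": "SUM(amount)"}
DIMENSIONS = {"region"}


def answer(conn, request: dict) -> dict:
    """Run a structured request proposed by an AI model, with guardrails."""
    metric, dimension = request.get("metric"), request.get("dimension")
    if metric not in METRICS:
        raise ValueError(f"unknown metric: {metric!r}")
    if dimension not in DIMENSIONS:
        raise ValueError(f"unknown dimension: {dimension!r}")
    # The SQL is built from catalog entries only, so the same request
    # always produces the same query text.
    sql = (
        f"SELECT {dimension}, {METRICS[metric]} AS {metric} "
        f"FROM orders GROUP BY {dimension} ORDER BY {dimension}"
    )
    rows = conn.execute(sql).fetchall()
    # Return the evidence alongside the numbers so users can inspect it.
    return {"rows": rows, "trace": {"sql": sql, "metric_logic": METRICS[metric]}}


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?)",
    [("EU", 100.0), ("EU", 50.0), ("US", 70.0)],
)
result = answer(conn, {"metric": "revenue", "dimension": "region"})
print(result["rows"])
print(result["trace"]["sql"])
```

A request for a metric that is not in the catalog fails loudly instead of producing an improvised calculation, and every answer carries the exact query that produced it.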

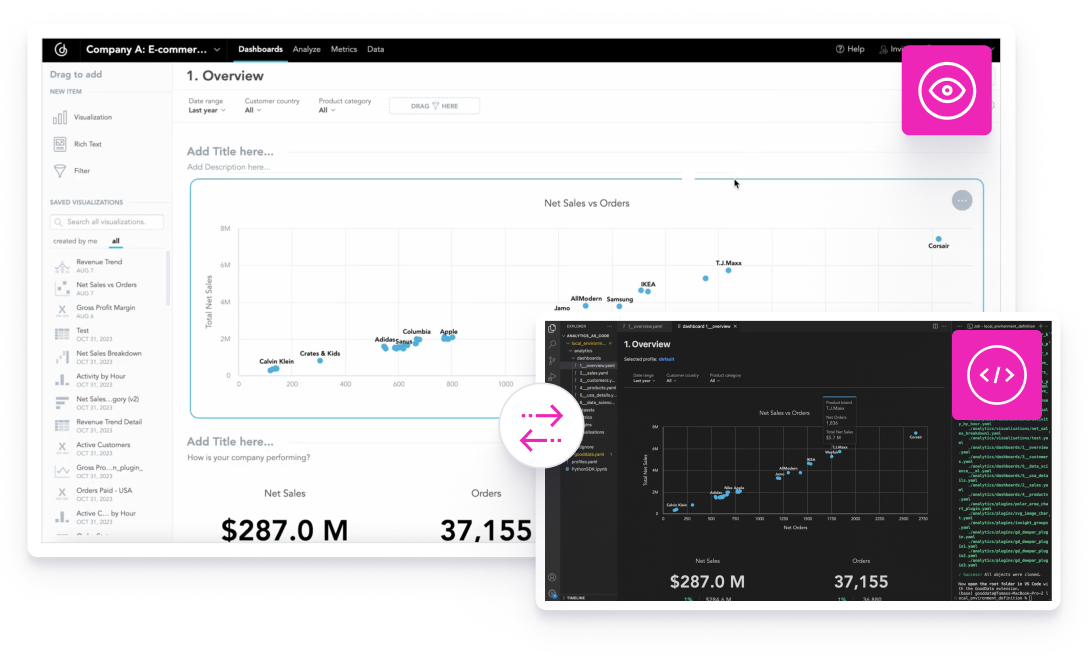

Analytics as Code and AI Extensibility (MCP-Ready)

Modern analytics platforms increasingly support analytics-as-code, allowing teams to define metrics and logic programmatically. This approach strengthens governance and improves collaboration between data and engineering teams.

Evaluation factors include:

- Programmatic metric and model definitions.

- Version control for analytics logic.

- CI/CD support for analytics changes.

- Open APIs and structured integration interfaces.

- Support for MCP Server and external AI agents.

Note: When analytics is programmable and extensible, AI can integrate into broader automation strategies without compromising control or consistency.
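
As a rough illustration of analytics-as-code in a CI/CD pipeline, the following sketch validates metric definitions before they are merged. The required fields and the `validate_definitions` function are assumptions made for this example; a real platform would define its own schema.

```python
REQUIRED_FIELDS = {"name", "expression", "version", "owner"}


def validate_definitions(definitions: list) -> list:
    """Return human-readable errors; an empty list means the check passes."""
    errors = []
    seen = set()
    for i, d in enumerate(definitions):
        missing = REQUIRED_FIELDS - set(d)
        if missing:
            errors.append(f"definition {i}: missing {sorted(missing)}")
            continue
        if d["name"] in seen:
            errors.append(f"{d['name']}: duplicate metric name")
        seen.add(d["name"])
        if not isinstance(d["version"], int) or d["version"] < 1:
            errors.append(f"{d['name']}: version must be a positive integer")
    return errors


definitions = [
    {"name": "revenue", "expression": "SUM(amount)", "version": 1, "owner": "finance"},
    {"name": "revenue", "expression": "SUM(net)", "version": 0, "owner": "sales"},
    {"name": "churn"},
]
for problem in validate_definitions(definitions):
    print(problem)
```

A CI job could run a check like this on every pull request and block the merge when the list of errors is not empty, so a duplicate or unversioned metric never reaches production.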

Security, Compliance, and Enterprise Readiness

Security and deployment flexibility remain foundational requirements. A production-ready platform must enforce consistent access controls and compliance safeguards across both traditional analytics and AI-generated outputs.

Core capabilities include:

- Row-level and column-level security.

- Full auditability of queries and changes.

- Cloud-native architecture with support for embedded and hybrid deployments.

Note: Organizations operating under GDPR, HIPAA, SOC 2, or similar frameworks must ensure that AI-driven insights inherit the same protections as standard reporting. AI does not replace governance. It must operate within it.
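
The idea that AI-generated answers should inherit the same protections as standard reporting can be sketched as a single policy function that both paths read through. The user structure (`regions`, `hidden_columns`) and the `apply_security` function are hypothetical and simplified for illustration.

```python
def apply_security(rows: list, user: dict) -> list:
    """Filter rows and columns by the user's entitlements.

    Both dashboards and AI-generated answers are assumed to read through this
    one function, so neither path can bypass the policy.
    """
    allowed_regions = set(user["regions"])
    hidden = set(user.get("hidden_columns", ()))
    return [
        {k: v for k, v in row.items() if k not in hidden}  # column-level
        for row in rows
        if row["region"] in allowed_regions                # row-level
    ]


rows = [
    {"region": "EU", "customer": "Acme", "revenue": 150.0},
    {"region": "US", "customer": "Globex", "revenue": 70.0},
]
analyst = {"regions": ["EU"], "hidden_columns": ["customer"]}
print(apply_security(rows, analyst))
```

In practice this filtering is usually enforced in the query engine or the database rather than in application code, but the principle is the same: one policy, applied to every consumer.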

Comparison Table of Top AI Data Analytics Platforms on the Market

Top AI Analytics Platforms Reviewed

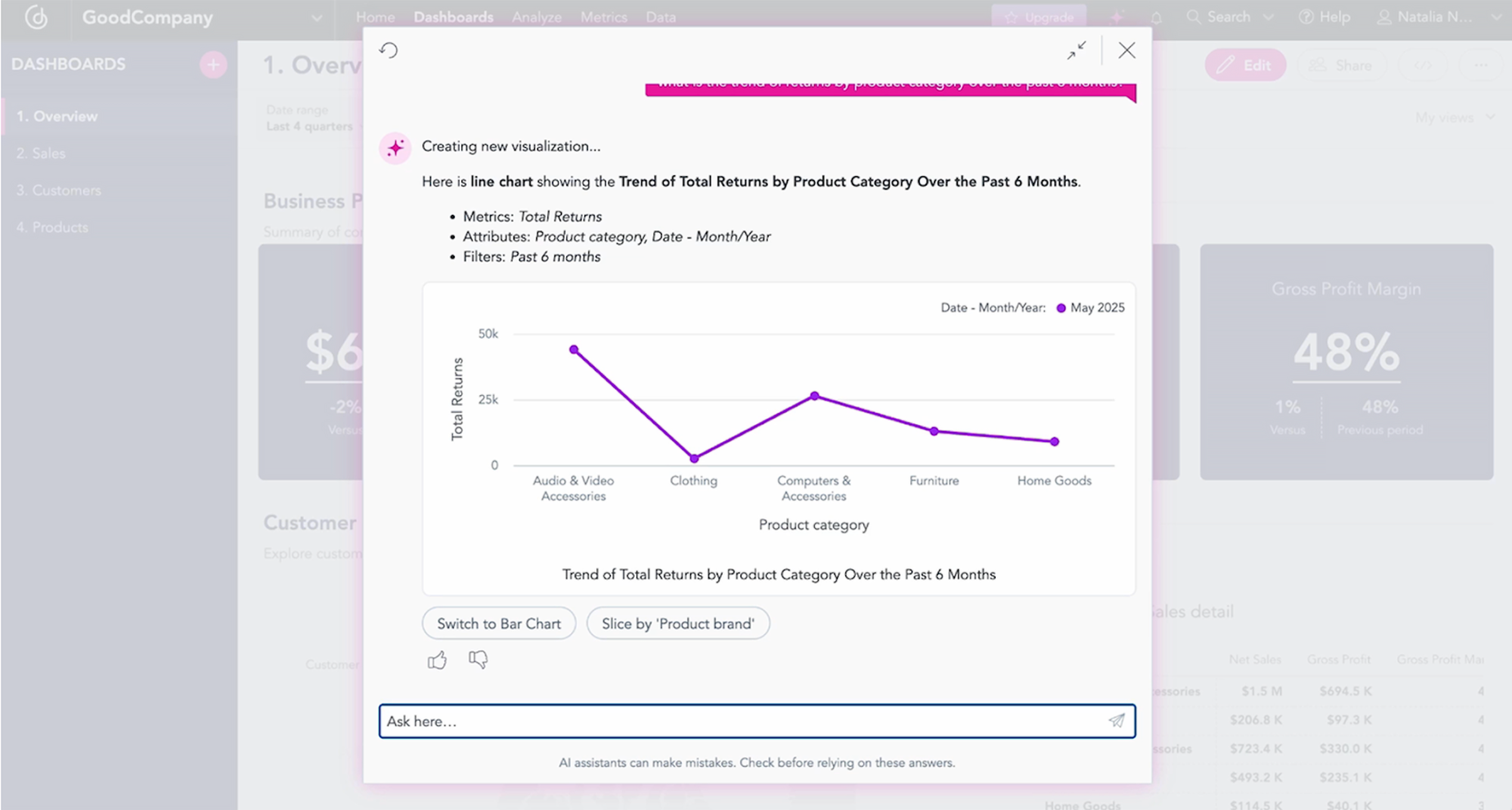

GoodData

Best for: Enterprises that need explainable AI analytics, governed metrics, and embedded analytics in products.

Key AI features:

- Governed, trustworthy AI analytics built on a first-class semantic/intelligence layer that explicitly defines business logic, metrics, and relationships.

- AI Assistant designed to work with governance and semantic layer orchestration (not just raw querying).

- Deterministic, reproducible answers that can be traced back to inspectable queries and metric definitions.

- Embedded-first AI experiences, enabling controlled AI insights directly inside applications and workflows.

Pros:

- Strong fit for “answers you can prove”, with an emphasis on governance and control.

- An embeddable approach supports controlled AI experiences within workflows and products.

- Enterprise-grade AI embedded analytics.

Cons:

- Requires upfront semantic modeling discipline: the tradeoff for higher trust and long-term consistency.

ThoughtSpot

Best for: Business teams who want self-service AI search over analytics with automated insight surfacing.

Key AI features:

- Search-driven natural language analytics (NLQ) for asking questions and exploring data without SQL or dashboard navigation.

- SpotIQ augmented analytics using ML and generative AI to automatically surface insights, trends, and outliers.

- Automated insight enhancements, including anomaly detection, change analysis, and basic forecasting.

Pros:

- Strong “time-to-answer” with NLQ + automated insight discovery.

- Good for scaling analytics to non-technical users (search-first UX).

- Automates repetitive tasks (models, dashboards, embedding).

Cons:

- Explainability and trust depend heavily on the quality of the setup: without strong governance, AI-generated answers can reflect inconsistent metric definitions.

- Metrics are more tightly coupled to dashboards and worksheets: lacks a single, reusable semantic layer, making it harder to consolidate and enforce consistent business definitions across the entire environment.

- Fundamentally search-based interaction model: users must adopt question-driven workflows, which may not suit all analytical or operational use cases.

Microsoft Power BI

Best for: Organizations that are standardized on Microsoft/Fabric and want AI-assisted reporting and exploration.

Key AI features:

- Natural language-driven report and visual generation, enabling users to describe questions and have dashboards or visuals created automatically.

- AI-assisted data exploration and summarization, tightly integrated with Fabric services such as OneLake, semantic models, and Azure AI.

Pros:

- Strong ecosystem integration: seamless alignment with Fabric, Azure AI, Microsoft 365, and enterprise identity/security.

- Deep Copilot integration.

Cons:

- Explainability and validation can become fragmented at scale: business logic is often distributed across multiple datasets and reports, making it harder to ensure consistent, provable AI-generated answers.

- Semantic governance varies widely by implementation: while Fabric semantic models exist, many enterprises still operate with duplicated or report-level logic, which limits AI trust.

- Copilot quality depends heavily on data modeling and governance discipline: AI-assisted insights are only as reliable as the underlying Fabric and Power BI semantic setup.

See how Power BI stacks up against GoodData in this comparison guide.

Tableau

Best for: Teams that want strong visualization plus an AI assistant to accelerate analysis and authoring.

Key AI features:

- Tableau Pulse provides AI-powered metric monitoring, summaries, and proactive insights.

- Ask Data / Explain Data for natural language querying and automated explanations of trends and outliers.

- Einstein / Tableau AI integration brings generative AI–based explanations, summaries, and recommendations.

- AI-assisted insight delivery embedded into Tableau dashboards and Salesforce workflows.

Pros:

- Familiar BI-first experience with AI augmentation: AI enhances existing dashboards and workflows without requiring users to change how they work.

- Strong narrative and explanation features: useful for summarizing trends and communicating insights to business users.

- Deep Salesforce ecosystem integration: especially valuable for CRM-centric analytics and operational use cases.

- Pulse provides opinionated, metric-centric monitoring: good for pushing insights proactively rather than relying on ad-hoc exploration.

Cons:

- AI operates primarily at the dashboard and metadata level: business logic is often embedded in workbooks rather than a centralized semantic layer, limiting deterministic control.

- Metric governance can be fragmented: definitions may vary across dashboards, making it harder to guarantee consistent AI-generated answers.

- Explainability is limited for AI-generated insights: users often see what changed, but not always why in a fully inspectable, reproducible way.

- Less suited for strict embedded or regulated use cases: enforcing consistent business meaning across dashboards, APIs, and AI can be challenging at scale.

See how Tableau stacks up against GoodData in this comparison guide.

Qlik

Best for: Organizations that want AI-augmented exploration across varied datasets and dashboard experiences.

Key AI features:

- Insight Advisor evolution includes conversational analytics (Insight Advisor Chat) and LLM-driven language generation.

- Advanced analysis types include forecasting, key driver analysis, plus Qlik AutoML for predictive analytics.

- Natural language insights directly on dashboards (narrative/NLG object).

Pros:

- Strong automated insight discovery: the associative engine can surface relationships and outliers that may be missed in manual analysis.

- Good support for exploratory analytics: encourages discovery across dimensions without predefined query paths.

- Mature augmented analytics features: Insight Advisor and AutoML support guided analysis and forecasting scenarios.

- Works well for analyst-driven exploration: particularly effective for complex datasets and ad-hoc investigation.

Cons:

- Business logic is often embedded at the app level: metrics and definitions can be tightly coupled to Qlik apps, limiting reuse and global consistency.

- Explainability depends on user expertise: AI-generated insights may not always be easily traceable or reproducible across contexts.

- Less deterministic metric behavior: associative exploration can produce different answers depending on user selections and context.

- Steeper learning curve for non-technical users: especially compared to search-first or chat-based AI analytics tools.

See how Qlik stacks up against GoodData in this comparison guide.

Yellowfin BI

Best for: Organizations and SaaS vendors that want embedded analytics with automated insights and data storytelling, without heavy AI experimentation or open agent-based architectures.

Key AI features:

- Yellowfin Signals for automated detection of trends, anomalies, and changes in key metrics.

- Assisted insights and narrative explanations, focusing on “what changed” and “why it matters.”

- Natural language–style interaction for explaining insights, rather than full conversational NLQ.

- AI-driven alerts and notifications, proactively pushing insights to users.

Pros:

- Strong automated insight surfacing: Signals effectively monitor KPIs and identify changes without manual exploration.

- Good storytelling and narrative delivery: helps business users understand insights in context, not just view charts.

Cons:

- Limited conversational AI and NLQ depth: less flexible than search-first or chat-based AI analytics platforms.

- AI operates primarily at the insight layer: less emphasis on a centralized, reusable semantic layer governing all metrics.

- Metric governance and reuse can be constrained: definitions may be tied to reports or Signals rather than a universal ontology.

- Less suitable for complex analytical exploration: focused more on monitoring and explanation than deep, ad-hoc analysis.

- Limited AI extensibility: external LLMs and agents cannot interact with Yellowfin analytics via MCP.

Tellius

Best for: Teams that want AI to surface root causes/key drivers and move from dashboards to explanations.

Key AI features:

- Tellius positions itself around agentic analytics/AI agents and augmented analytics.

- Automated root cause, key driver, and anomaly detection under “AI Insights.”

- Conversational analytics aim to turn conversations into outcomes/workflows.

Pros:

- Designed around asking questions and receiving insights, not building dashboards.

- Agents collaborate (plan, prep, visualize, etc.) rather than simply providing chat responses.

- Semantic knowledge layer aims to reduce hallucinations and provide trustworthy results.

- Users can create or tailor AI agents without coding.

Cons:

- Poor data quality reduces agentic accuracy and insight relevance.

- Unstructured or text queries are not a stated strong point at this stage.

IBM watsonx / Cognos Analytics

Best for: Large enterprises that need established, governed BI and reporting with AI-assisted exploration (particularly in regulated or compliance-heavy environments).

Key AI features:

- AI Assistant for Cognos Analytics supporting natural language input to create visualizations, dashboards, and narrative explanations.

- AI-assisted data exploration and preparation, aimed at simplifying analysis for business users.

- Narrative insights and automated explanations to describe trends, drivers, and changes in metrics.

- Integration with IBM watsonx for broader AI and governance capabilities within the IBM ecosystem.

Pros:

- Reliable for operational and compliance-focused use cases.

- Well-suited for controlled reporting, auditability, and regulated environments.

- Helps reduce manual effort for common analytical workflows.

Cons:

- Compared to newer AI-native or semantic-first platforms, setup and workflows can feel more complex.

- AI experiences are layered onto traditional BI: less fluid, conversational, or agent-like than modern AI-first analytics platforms.

- Limited semantic-first AI abstraction: business logic is often embedded in models and reports rather than exposed as a reusable, AI-native semantic layer.

Databricks

Best for: Data engineering and data science teams seeking natural language analytics on the lakehouse with scalable AI capabilities.

Key AI features:

- AI/BI Genie (GA) enables natural language questions that generate insights. It can be paired with dashboards through a companion Genie space.

- “Deep Research” capabilities with structured plans, hypotheses, and cited conclusions.

- Genie spaces can be configured with datasets, sample queries, and usage guidelines. Performance can be monitored and refined through feedback.

Pros:

- A unified analytics and AI platform: analytics, ML, and AI operate on the same data foundation without duplication.

- Good foundation for agent-style analytical workflows, especially when combined with notebooks, ML pipelines, and orchestration.

Cons:

- Less accessible for non-technical business users than BI tools with strong UX-led self-service and storytelling: Databricks is mainly an AI data platform for developers and data analysts.

H2O.ai

Best for: Data science and analytics teams prioritizing predictive modeling/explainability over dashboards/interactive BI.

Key AI features:

- End-to-end ML workflow support: from data preparation to deployment and monitoring.

- Strong focus on auditable models: particularly for regulated and high-stakes use cases.

Pros:

- Trusted in regulated industries: commonly used where explainability and compliance are mandatory.

- Mature ML tooling: well-suited for forecasting, classification, risk scoring, and optimization use cases.

Cons:

- Typically complements BI tools rather than replacing them: predictions are often surfaced via downstream BI or applications.

- No semantic-first business metric layer: focuses on ML features and targets, rather than governed, reusable business metrics.

Incorta

Best for: Organizations that want faster time-to-insight on detailed/live data and an AI copilot for building insights.

Key AI features:

- Real-Time Unified Data Foundation: Incorta provides a data platform that connects directly to operational source systems and delivers real-time, raw-data access without ETL, so analytics and AI are always based on the freshest available data.

- AI-Powered Querying: users can interact with data using AI-driven exploration or natural language to get insights without deep SQL or BI expertise.

- Incorta’s emerging Nexus suite enables AI workflows that connect data insights with actions and business process triggers across finance, operations, and supply chain.

- Nexus AIDA provides native agents built for specific business domains (e.g., planning, procurement) that use real-time data to deliver context-rich, automated decisions.

- Nexus Marketplace for Agents: partners and developers can build, share, and monetize custom agents through a marketplace, extending agentic capabilities.

Pros:

- Supports specific domain agents (Nexus AIDA) that can automate routine tasks and decisions.

- Integrates AI, analytics, and workflow together, not as separate tools.

Cons:

- “Answers you can prove” depends on governance and semantic rigor during implementation.

- Incorta has yet to market a rich ecosystem of distinct agent types (e.g., modeling, visualization, embedding).

- Because the agentic vision is broad, practical use cases vary widely between organizations.

Why GoodData Is the Best Enterprise AI Analytics Platform in 2026

GoodData stands out as the best AI analytics platform for organizations that need advanced AI capabilities without sacrificing governance, explainability, or control.

While many platforms emphasize conversational speed or automation alone, GoodData combines natural language querying, predictive and agentic AI features, and real-time analytics with deterministic logic, semantic governance, and enterprise-grade security.

The result is trusted AI analytics that delivers both intelligence and accountability.

AI Analytics Built on Proven Business Semantics

GoodData is an AI-native analytics platform built on a first-class semantic layer. Instead of allowing AI to interpret raw schemas, GoodData encodes business logic, metric definitions, relationships, and time intelligence directly into its architecture.

This ensures that dashboards, APIs, and AI-generated responses all reference the same centralized definitions. As usage scales across teams or customer-facing applications, meaning remains consistent and governed.

By embedding business semantics into the core platform, GoodData enables AI to operate within defined context rather than inferring intent from column names or metadata.

This foundation supports both internal analytics teams and AI-driven products. For example, Zeals, a company that builds chat-based ecommerce experiences, uses GoodData to simplify the development and management of analytics within its platform.

Instead of manually building and maintaining metric logic on the front end, Zeals relies on GoodData’s semantic layer to manage analytics as code within a centralized system.

Secure and Governed AI Analytics for Enterprises

GoodData is a secure AI analytics platform designed for enterprise-grade deployment. Governance, access control, and auditability are integrated directly into the analytics layer rather than layered on afterward.

The platform supports row-level and column-level security, full query auditability, and cloud-native architectures suitable for embedded, hybrid, and multi-tenant environments. AI-generated insights inherit the same compliance safeguards required under frameworks such as GDPR, HIPAA, and SOC 2.

By combining advanced AI capabilities with semantic control and security, GoodData enables organizations to scale trusted AI analytics across teams, products, and workflows.

Want to see how GoodData delivers advanced AI with governed semantics, explainable results, and enterprise-grade security — without sacrificing speed? Request a demo today.

FAQs About AI Data Visualization and AI Analytics Platforms

What are the best AI-powered analytics platforms available today?

Top AI data analytics tools include GoodData, ThoughtSpot, Microsoft Power BI, Tableau, Qlik, Yellowfin BI, Tellius, IBM watsonx / Cognos Analytics, Databricks, H2O.ai, and Incorta. These platforms offer varying strengths, from governed semantic layers and embedded analytics to search-driven insights and predictive modeling.

How accurate are AI analytics tools compared to traditional dashboards?

Modern AI tools for data analytics can be as accurate as traditional dashboards when built on governed metric definitions and controlled data processing pipelines. Accuracy depends primarily on the semantic layer and data quality. Without centralized definitions and validation controls, AI-generated results can vary across teams or over time.

Can AI analytics platforms explain how they generate insights?

Modern AI analytics platforms can explain how insights are generated by exposing query logic, metric lineage, and calculation rules. Explainable AI analytics allows users to inspect joins, filters, and time definitions. Platforms that lack visibility operate as black boxes, which limits trust and auditability.

What risks should companies watch for when using generative AI in analytics?

Generative AI in analytics introduces risks such as hallucinated metrics, inconsistent business definitions, and non-deterministic outputs. Without architectural guardrails, AI-driven analytics tools may reinterpret logic or produce shifting results. Companies should prioritize platforms that enforce governed semantics and protect against metric drift.

How do AI analytics tools handle changing business metrics over time?

Strong AI analytics tools support versioned metric definitions and centralized change management. When business rules evolve, updates are documented and consistently applied across dashboards, APIs, and AI outputs. Platforms without structured governance risk silent inconsistencies and unstable reporting.

Do AI-powered analytics platforms work with real-time or streaming data?

Many AI-powered analytics platforms support real-time or near-real-time data processing through cloud-native analytics architectures. The most scalable solutions integrate directly with modern data warehouses and streaming pipelines, enabling real-time dashboards, monitoring, and AI-driven decision support.

How can companies evaluate AI analytics platforms before committing to one?

Companies should evaluate AI analytics software based on semantic governance, explainability, scalability, security, and integration flexibility. Any structured comparison of AI analytics platforms should include hands-on testing with real business metrics, not just feature demonstrations. Pilot implementations often reveal long-term stability and trustworthiness.