Why You Should Create Your Next Analytics Project in Code

Everything as Code

In the mid-2000s, AWS introduced its cloud computing services. It was a true disruption that changed the way DevOps teams operate, but it also brought its own challenges. How do you manage complex infrastructure in a reliable, reproducible, and simple way?

Well, you apply the same principles that software engineers had been perfecting for decades: you put it in code, version it, and automate it. Thus, Infrastructure as Code was born.

Soon, more and more aspects of the modern tech company became “codified”. We’ve seen DevOps Pipelines as Code, Security as Code, and finally, Analytics as Code. A few years ago, an umbrella term emerged: Everything as Code. In a nutshell, it suggests that any process, be it business or technical, can be defined as code and benefit from it.

Analytics as Code

There are some good reasons to use a software engineering workflow for analytics:

- The code is easy to version. Imagine having every single iteration of your solution securely stored and revertible. Imagine having a note attached to every change explaining why it was done, when, and by whom.

- The code is easy to collaborate on. There are platforms dedicated to collaborative coding, like GitHub or GitLab, with features like Pull Requests and Code Reviews.

- Last but not least, it is easy to automate a solution or process defined “as code”. This is not only about deployment but also about quality control: test automation is a must-have in any modern software solution.
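To make the quality-control point concrete, here is a minimal Python sketch of two data checks similar in spirit to dbt’s built-in `unique` and `not_null` tests, run against an in-memory SQLite table. The `orders` table, its columns, and the `check_*` helpers are hypothetical; this is not how dbt implements its tests, only an illustration of a test that can run automatically on every change.

```python
import sqlite3


def build_orders(conn: sqlite3.Connection) -> None:
    """Create a small, hypothetical `orders` table standing in for a transformation's output."""
    conn.execute("CREATE TABLE orders (order_id INTEGER, customer_id INTEGER, amount REAL)")
    conn.executemany(
        "INSERT INTO orders VALUES (?, ?, ?)",
        [(1, 10, 25.0), (2, 11, 40.0), (3, None, 15.0)],  # order 3 is missing its customer
    )


# Table and column names are interpolated directly, so they must come from trusted
# project code, never from user input.
def check_not_null(conn: sqlite3.Connection, table: str, column: str) -> int:
    """Return how many rows have a NULL in `column`; 0 means the check passes."""
    (failures,) = conn.execute(
        f"SELECT COUNT(*) FROM {table} WHERE {column} IS NULL"
    ).fetchone()
    return failures


def check_unique(conn: sqlite3.Connection, table: str, column: str) -> int:
    """Return how many non-NULL values of `column` occur more than once; 0 means it passes."""
    (failures,) = conn.execute(
        f"SELECT COUNT(*) FROM (SELECT {column} FROM {table} "
        f"WHERE {column} IS NOT NULL GROUP BY {column} HAVING COUNT(*) > 1)"
    ).fetchone()
    return failures


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    build_orders(conn)
    results = {
        "orders.order_id is unique": check_unique(conn, "orders", "order_id"),
        "orders.customer_id is not null": check_not_null(conn, "orders", "customer_id"),
    }
    for name, failures in results.items():
        status = "PASS" if failures == 0 else "FAIL"
        print(f"{status} {name}: {failures} failure(s)")
```

Because each check is just code, it can be versioned, reviewed, and run by a CI job alongside the transformation it protects.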

The code approach in analytics is not new. After all, SQL is code, and it’s the oldest tool in the box. What matters, however, is the extent to which you apply the software engineering workflow. It is one thing to write SQL in the Power BI web interface and another thing entirely to create a dbt transformation, commit it to a Git repository, ask your colleague to review it, and run automation scripts that test and deploy the new version to production.

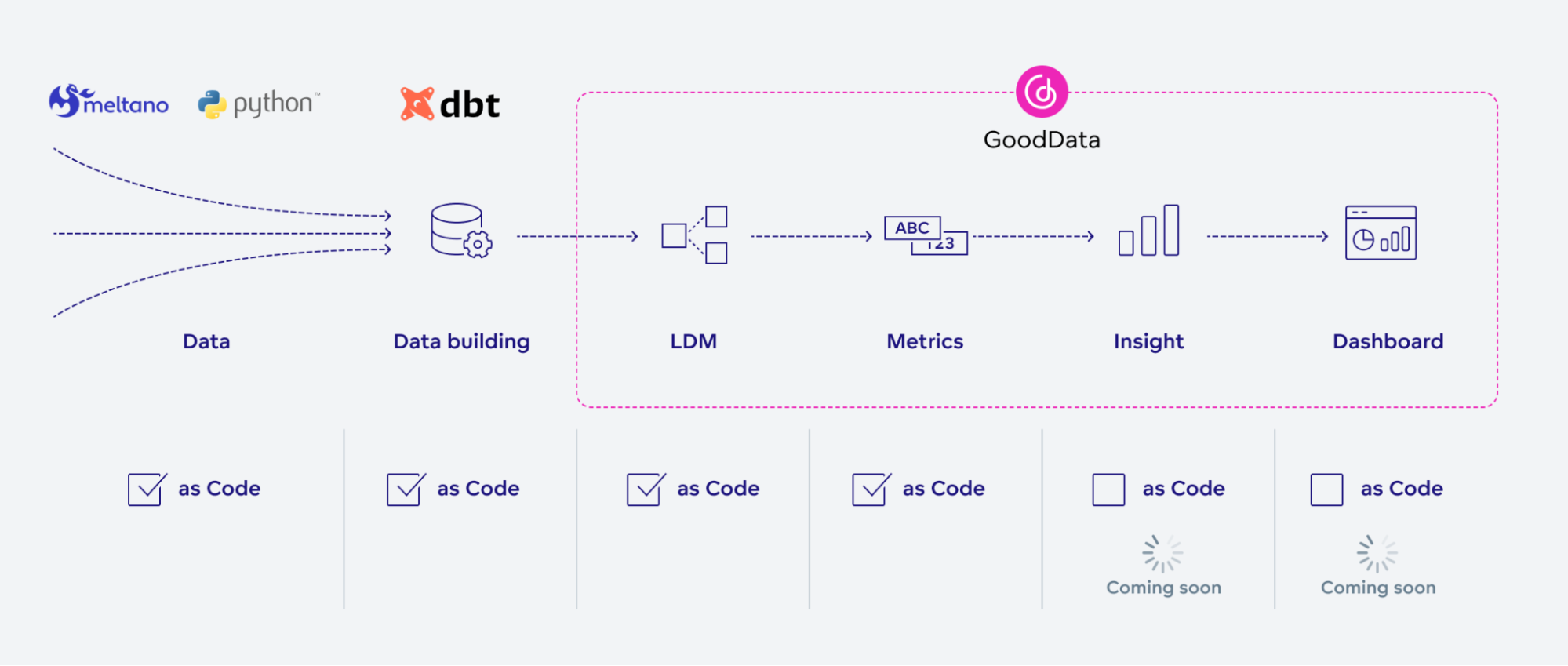

Let’s have a look at a typical analytics solution. Your data pipeline usually starts with data extraction and loading. Unless you have a very simple use case, you probably have custom scripts that extract and clean the data and place it in storage. Then comes a data transformation step that creates an analytics-friendly database structure for a specific use case. Tools such as dbt are becoming more and more popular, particularly because their transformations are testable and repeatable, and because they follow the “as code” approach.
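The stages described above can be sketched end to end in plain Python with the standard library’s `csv` and `sqlite3` modules. Everything here is a hypothetical stand-in: the inline CSV replaces a real source system, SQLite replaces your warehouse, and the final `CREATE TABLE ... AS SELECT` plays the role a dbt model would normally take.

```python
import csv
import io
import sqlite3

# Hypothetical raw export standing in for data pulled from a source system.
RAW_CSV = """order_id,country,amount
1, us ,25.00
2,DE,40.50
3,us,
4,de,10.00
"""


def extract(raw: str) -> list[dict[str, str]]:
    """Extract: parse the raw export into one dict per record."""
    return list(csv.DictReader(io.StringIO(raw)))


def clean(rows: list[dict[str, str]]) -> list[tuple[int, str, float]]:
    """Clean: normalize country codes and drop records without an amount."""
    cleaned = []
    for row in rows:
        if not row["amount"].strip():
            continue  # incomplete record; a real pipeline might quarantine it instead
        cleaned.append((int(row["order_id"]), row["country"].strip().upper(), float(row["amount"])))
    return cleaned


def load(conn: sqlite3.Connection, rows: list[tuple[int, str, float]]) -> None:
    """Load: store the cleaned records in a staging table."""
    conn.execute("CREATE TABLE stg_orders (order_id INTEGER, country TEXT, amount REAL)")
    conn.executemany("INSERT INTO stg_orders VALUES (?, ?, ?)", rows)


def transform(conn: sqlite3.Connection) -> None:
    """Transform: build an analytics-friendly table, the step a dbt model would usually own."""
    conn.execute(
        "CREATE TABLE revenue_by_country AS "
        "SELECT country, COUNT(*) AS orders, SUM(amount) AS revenue "
        "FROM stg_orders GROUP BY country"
    )


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    load(conn, clean(extract(RAW_CSV)))
    transform(conn)
    for row in conn.execute("SELECT country, orders, revenue FROM revenue_by_country ORDER BY country"):
        print(row)
```

Keeping each stage a small, separate function makes it easy to version, review, and test each step independently, which is the point of the “as code” approach.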

Then come the layers that define your analytics: a semantic model (for dimensional analytics tools), metrics, KPIs, insights, dashboards, and so on. This is where the “as code” approach is relatively new and not yet widely adopted. At GoodData, we’ve supported the “as code” approach for a while now with our Declarative API, focusing on deployment and automation. Now we have released our VS Code extension, which brings software development best practices to analytics.

Why not try our 30-day free trial?

Fully managed, API-first analytics platform. Get instant access — no installation or credit card required.

The New Workflow

So, how would your day-to-day workflow change with Analytics as Code?

Here is a typical example:

In the morning you get your fresh cup of coffee, open VS Code with the GoodData extension installed, and start working on a new feature.

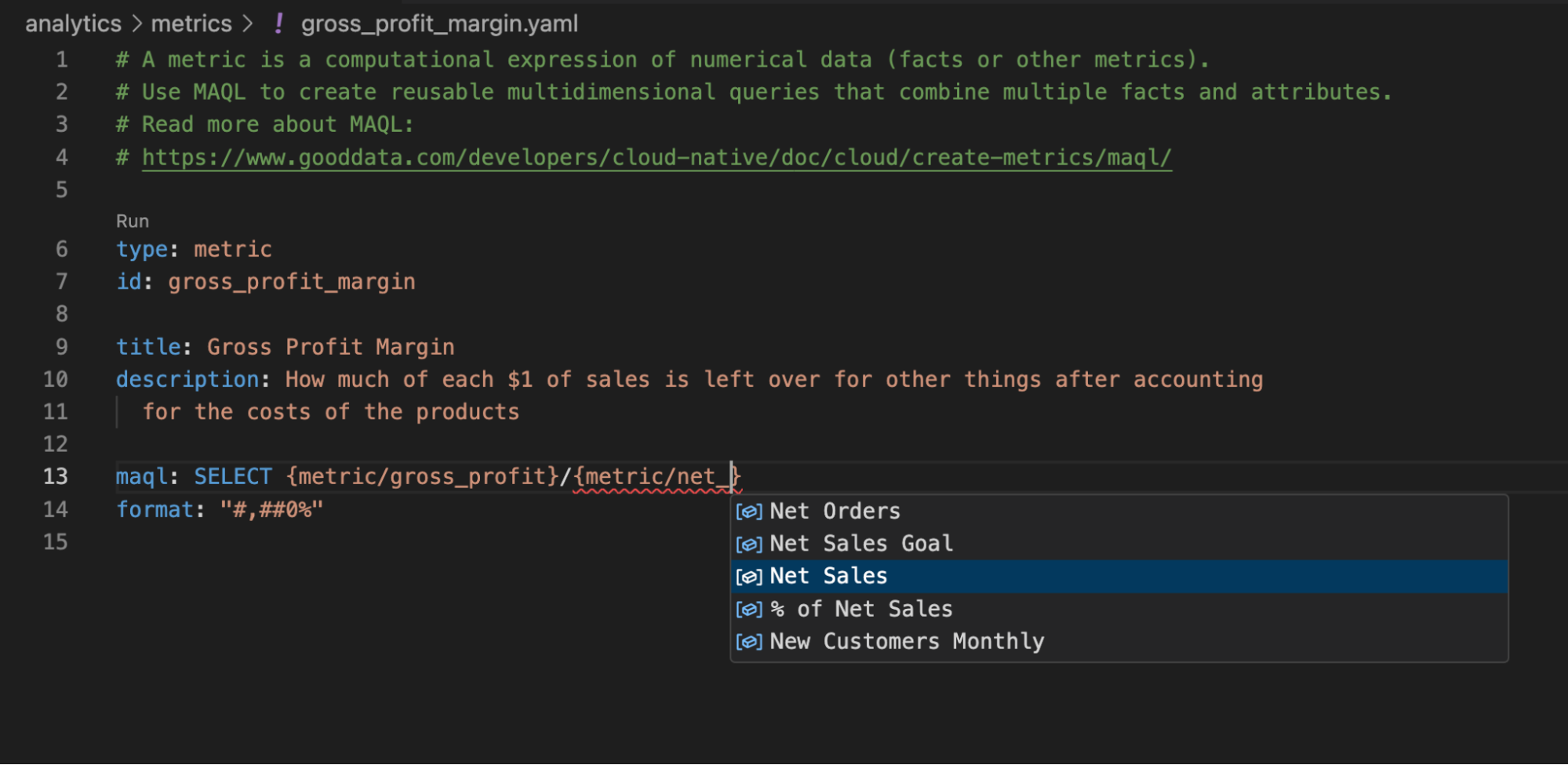

You develop a new metric or adjust your semantic model and benefit from autocomplete and real-time validation in the VS Code interface.

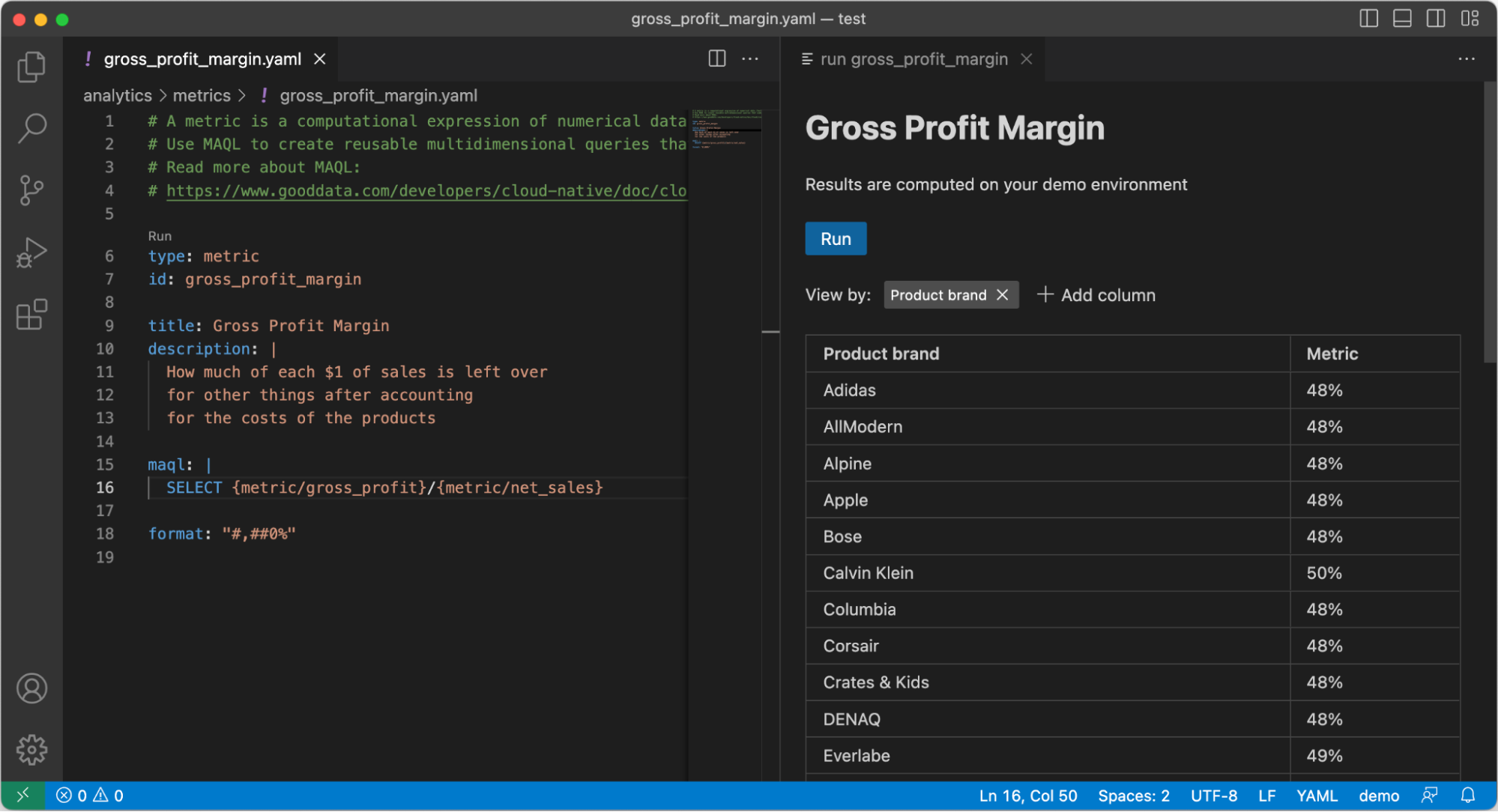

VS Code extension autocomplete

Occasionally, you run a preview, right in VS Code, to see what the result would look like.

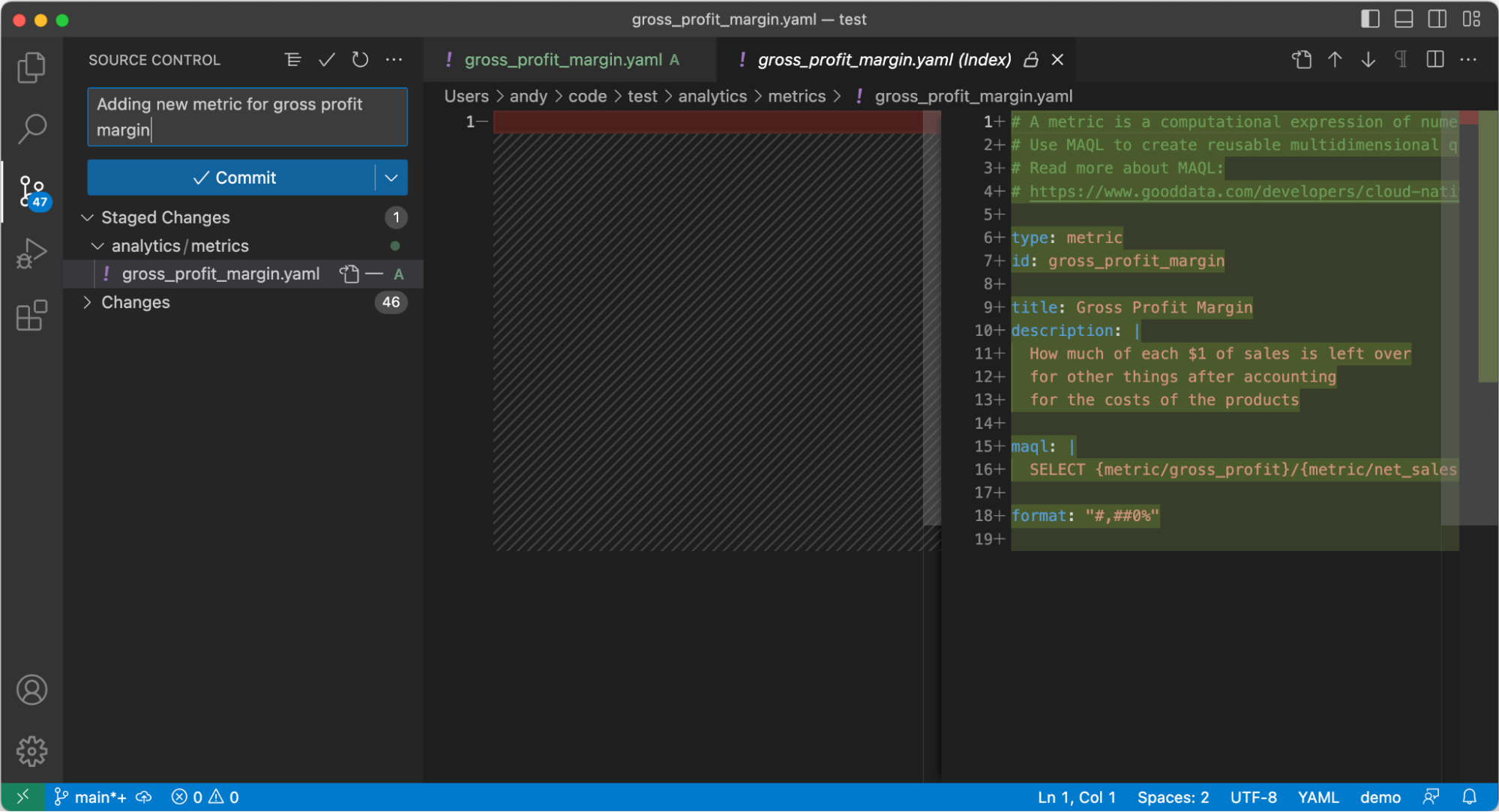

VS Code extension metric preview

All good? Great! Now you can commit your changes to a separate Git branch and push them to the repository. Don’t worry, VS Code has an excellent user interface for Git-related tasks, so there is no need to learn yet another CLI tool.

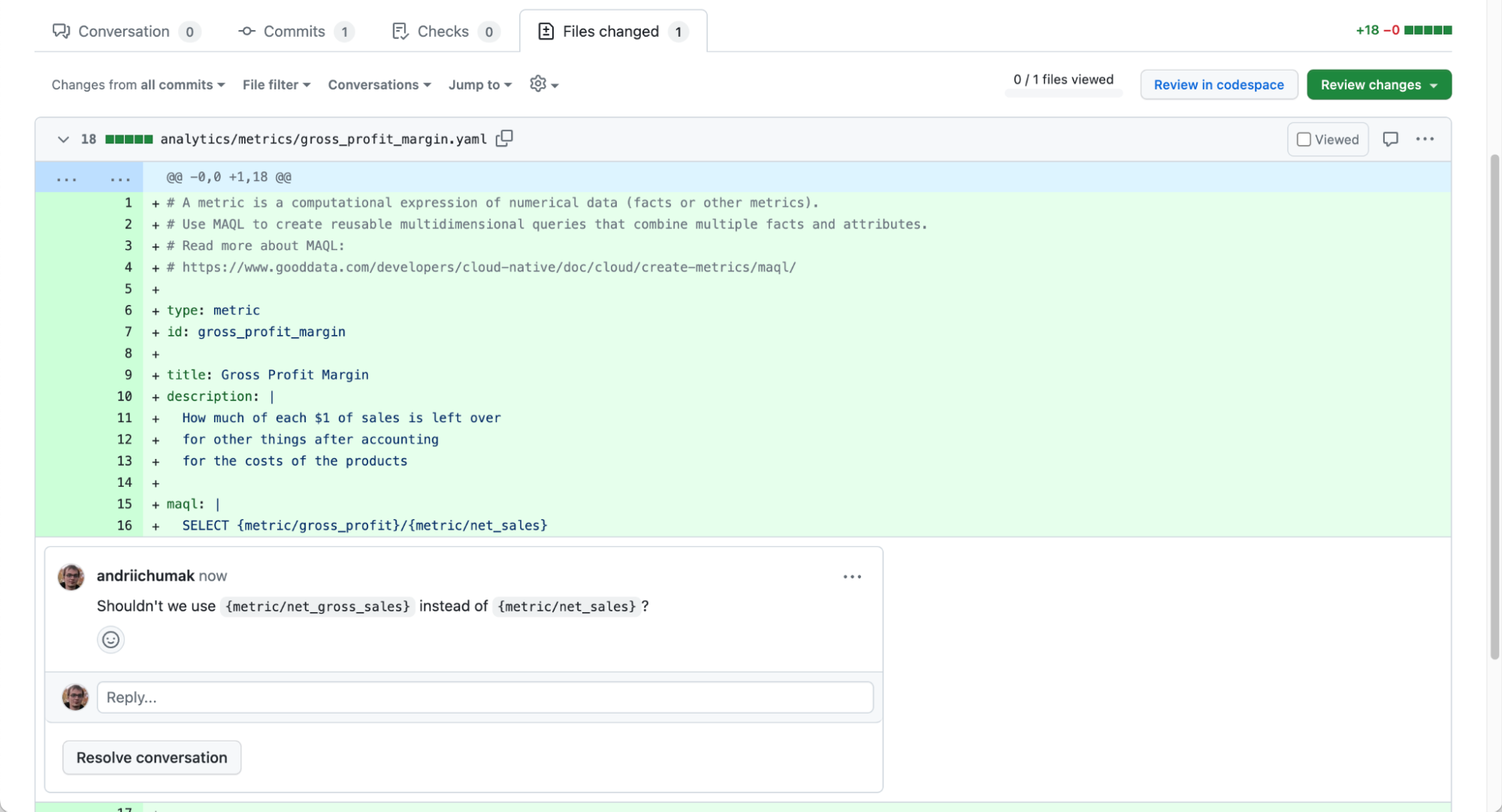

VS Code Git user interface

On GitHub (or GitLab, I won’t judge), you create a Pull Request and assign one of your colleagues to do a Code Review. Your colleague gets notified and sees exactly which files you would like to change and how. The Pull Request is a great place to discuss alternative solutions, share knowledge, and identify potential issues early on. It is also the place to argue whether tabs or spaces should be used for indentation (luckily, YAML only allows spaces; you’re welcome).

Code Review on GitHub

Once everyone is happy with the new code, the Pull Request is completed and your changes are merged into the main branch.

You can also set up CI/CD pipelines that test the solution automatically or deploy it to your staging server for manual testing and final approval.
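A CI/CD job usually boils down to running a few commands in order and failing as soon as one of them fails. The following Python sketch shows that fail-fast pattern. The step names and commands are placeholders that merely print a message; in a real pipeline you would swap in your own commands, such as your data tests or the validate and deploy steps of the GoodData command line application. No actual CLI syntax is assumed here.

```python
import subprocess
import sys

# Placeholder steps: each command just prints a message. Replace them with the
# commands your project really runs (data tests, validation, deployment). These
# names and commands are hypothetical, not real GoodData CLI syntax.
STEPS = [
    ("validate", [sys.executable, "-c", "print('validating the analytics project...')"]),
    ("test", [sys.executable, "-c", "print('running data tests...')"]),
    ("deploy-staging", [sys.executable, "-c", "print('deploying to staging...')"]),
]


def run_pipeline(steps: list[tuple[str, list[str]]]) -> int:
    """Run the steps in order, stop at the first failure, and return an exit code."""
    for name, command in steps:
        print(f"==> {name}", flush=True)  # flush so our output stays ordered with the child's
        result = subprocess.run(command)
        if result.returncode != 0:
            print(f"step '{name}' failed with exit code {result.returncode}", file=sys.stderr)
            return result.returncode  # later steps (e.g. deployment) never run
    return 0


if __name__ == "__main__":
    exit_code = run_pipeline(STEPS)
    print(f"pipeline finished with exit code {exit_code}")
    # In a real CI job, end with sys.exit(exit_code) so that a failure fails the job.
```

CI services such as GitHub Actions and GitLab CI/CD treat a non-zero exit code as a failed job, which is what keeps a broken change from reaching the deployment step.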

GoodData for VS Code

GoodData for VS Code is a set of tools that we created to make your life easier when building Analytics as Code.

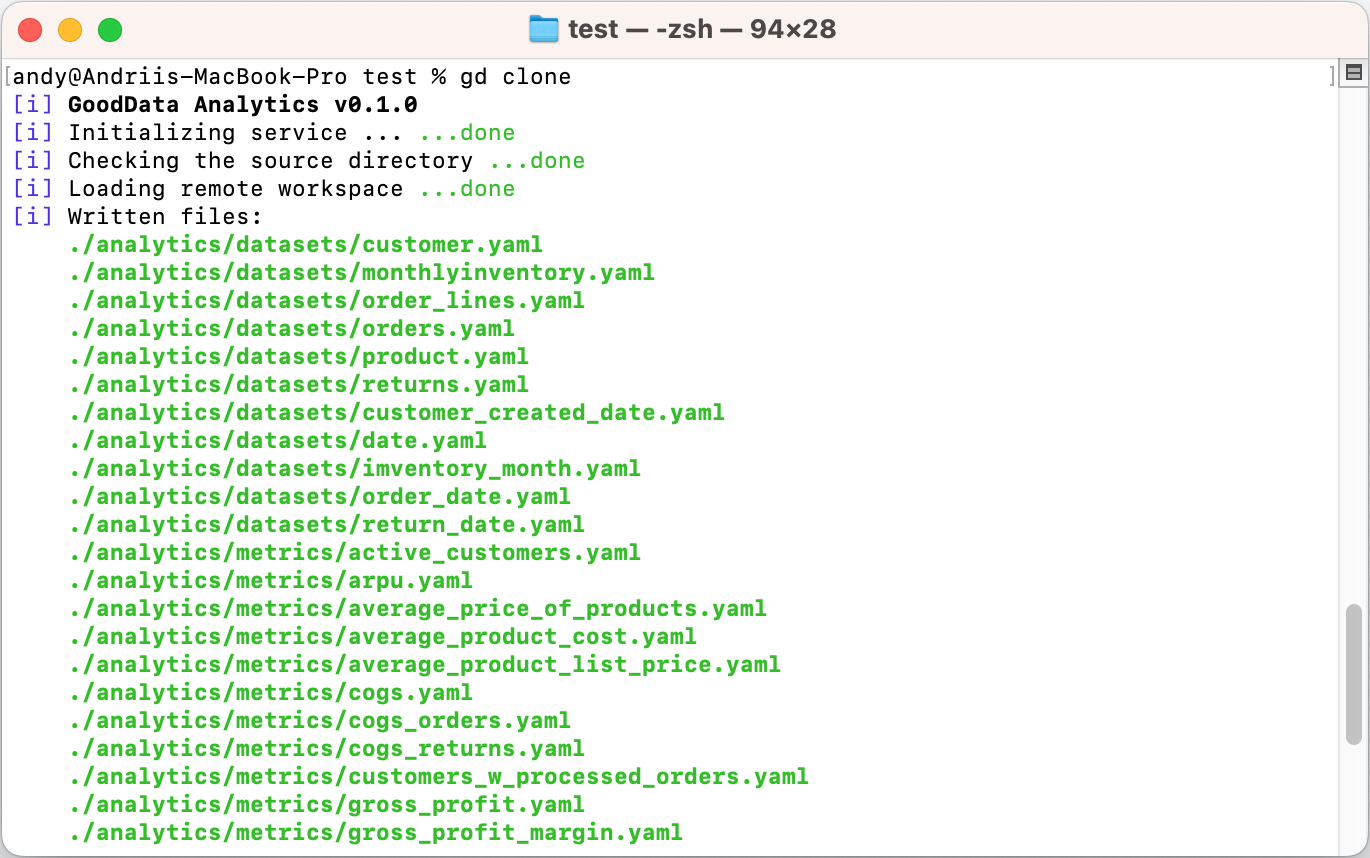

The first tool is a command line application that helps you create a new project, import an existing analytics workspace from the GoodData server, validate the project, and deploy your changes back to the GoodData server. It’s meant to be used both by you as an analytics author and in automated scripts executed when deploying the project to production.

The second tool is a Visual Studio Code extension. VS Code is an open-source code editor that was designed to make coding easier and more efficient. Our extension adds support for GoodData-specific code:

- Syntax highlighting for GoodData YAML files.

- Real-time validation of your project.

- Autocomplete as you type.

- Dataset and metric previews right in the code editor.
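At its core, real-time validation checks that everything a definition refers to actually exists. The sketch below illustrates the idea with a made-up, simplified semantic model (a dictionary of datasets and their fields) and a hypothetical `Metric` type that lists its references as `dataset.field` strings. It does not reflect GoodData’s actual YAML schema, metric language, or validation rules.

```python
from dataclasses import dataclass

# Hypothetical, simplified semantic model: dataset name -> fields it exposes.
SEMANTIC_MODEL: dict[str, set[str]] = {
    "orders": {"amount", "quantity", "order_date"},
    "customers": {"customer_id", "region"},
}


@dataclass
class Metric:
    name: str
    references: list[str]  # fields the metric reads, written as "dataset.field"


def validate(metric: Metric, model: dict[str, set[str]]) -> list[str]:
    """Return readable error messages; an empty list means every reference resolves."""
    errors = []
    for ref in metric.references:
        dataset, _, field = ref.partition(".")
        if dataset not in model:
            errors.append(f"{metric.name}: unknown dataset '{dataset}'")
        elif field not in model[dataset]:
            errors.append(f"{metric.name}: dataset '{dataset}' has no field '{field}'")
    return errors


if __name__ == "__main__":
    revenue = Metric("revenue", ["orders.amount"])
    avg_basket = Metric("avg_basket", ["orders.amount", "order.quantity", "orders.discount"])
    for metric in (revenue, avg_basket):
        problems = validate(metric, SEMANTIC_MODEL)
        print(f"{metric.name}: OK" if not problems else "\n".join(problems))
```

An editor integration can run a check like this as you type and show the messages inline, and the same function can run again in CI before deployment.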

Conclusion

Would you use the “as Code” approach for your next analytics solution? Let us know on our community Slack channel.

Want to try GoodData for VS Code yourself? Here is a good starting point. To use it, you’ll need a GoodData account. The easiest way to get one is to register for a free trial.
