Python SDK for Composable and Reusable Analytics

4 min read | Published

Python is one of today’s most popular programming languages, largely due to its simplicity and versatility. According to UC Berkeley Extension, it was the second-most in-demand programming language of 2021, and many companies that work with back-end development, app development, and data tend to use Python as their language of choice.

Another reason for its popularity among developers is the number of modules and frameworks provided by the Python community. It is worth mentioning that many of these modules and frameworks are open-source, thus improving their quality, security, and transparency.

Below, we'll examine a set of Python modules from GoodData.

What Is GoodData's Python SDK?

GoodData's Python SDK is a set of Python modules for interaction with GoodData Cloud analytics platform. These modules are everything you would expect — easy to use and open-source. Combined with other Python modules, the SDK creates a solid foundation for data analysis, data science, and data visualization.

GoodData's Python SDK contains these modules:

gooddata_sdk

- the main entry point for communication with GoodData Cloud

gooddata_fdw

- SQL gateway to GoodData, which uses the technology of Postgres Foreign Data Wrapper

gooddata_pandas

- allows working with data using Pandas data frames

Experience GoodData in Action

Discover how our platform brings data, analytics, and AI together — through interactive product walkthroughs.

Explore product toursHow Powerful Is GoodData's Python SDK?

GoodData’s Python SDK is an excellent interface for controlling GoodData Cloud — and things really start to get exciting when you combine multiple modules. So, let's take a look at a few use cases.

Analytics As Code

In the instance where you are building a data pipeline using Python, you can seamlessly extend your pipeline code with the automated generation of the analytics layer. This analytics layer not only can boost your effectiveness with consistency and reusability, but it also can provide managed data access across multiple departments, insights, and dashboards.

You can control your analytics layer with the gooddata_sdk module. Afterward, you can access data with the gooddata_fdw and gooddata_pandas modules, or you can handle the entire analytics layer using GoodData Cloud's UI.

Consuming Analytics via Pandas

When someone wants to carry out data analysis and data science using Python, they will come across well-known Python modules such as Pandas, PySpark, Matplotlib, NumPy, SciPy, scikit-learn, PyTorch, TensorFlow, and more. And as Python programmers working with data, we usually come across data structures such as data frames, arrays, tensors, etc. These data structures are generally easy to convert, creating an excellent ecosystem for working with data.

In this use case, we would like to highlight the gooddata_pandas module, which allows users to access their data using the Pandas data frame. If you have worked with data frames previously, you know that filtering, aggregation, and selection are all crucial aspects.

Say, for example, you have a database with multiple tables, and your goal is to get a data frame consisting of columns from various joined tables. With your data connected to GoodData Cloud, gooddata_pandas makes this task much more manageable. You can reuse your metrics from GoodData Cloud, use them to access your data, and directly get your desired data frame. This approach makes working with data more efficient and enables you to reuse metrics.

def good_pandas():

gp = GoodPandas(host=HOST, token=TOKEN)

frames = gp.data_frames(WORKSPACE_ID)

df = frames.not_indexed(columns=dict(

campaign_name='label/campaigns.campaign_name',

price_sum='fact/order_lines.price',

revenue='metric/revenue'

))

return dfdef pure_pandas():

engine = create_engine(f'postgresql+psycopg2://{USERNAME}:{PASSWORD}@localhost/demo')

query = 'select * from demo.demo.campaigns c join demo.demo.order_lines ol on ol.campaign_id = c.campaign_id;'

df = pd.read_sql_query(query, con=engine)

grouped_df = df.groupby(["campaign_name"]).sum()

price_sum = grouped_df[["price"]]

filtered_df = df.loc[df.order_status == "Delivered"].copy()

filtered_df["order_amount"] = filtered_df["price"] * filtered_df["quantity"]

filtered_df_grouped = filtered_df.groupby(['campaign_name']).sum()

filtered_df_grouped = filtered_df_grouped[["order_amount"]]

wanted_df = price_sum.join(filtered_df_grouped, on='campaign_name', how='left')

wanted_df.reset_index(level=0, inplace=True)

wanted_df = wanted_df.rename(columns={"price": "price_sum", "order_amount": "revenue"})

return wanted_dfBoth functions above get the same data frame. As you can see, the approach using the good_pandas module is more straightforward. It uses metric revenue, which can be defined either using gooddata_sdk or GoodData Cloud, and it's a trivial MAQL query, as you can see below.

SELECT SUM({fact/order_lines.price} * {fact/order_lines.quantity})

WHERE {label/order_lines.order_status} = "Delivered"The metric revenue can be used in retrieving other data frames, and the aggregation is going to adapt, which is terrific. So, as you can see, by using gooddata_pandas, you can dramatically boost the performance and efficiency of your work.

Consuming Analytics Results From Your Application via PostgreSQL

Foreign Data Wrapper is currently deprecated

Suppose you prefer to access your data from other (non-Python) environments. As such, you can expose your data using gooddata_fdw as PostgreSQL. Exposing your data in this way results in multiple options by which to process them afterward. For example, if you prefer to use it for data analysis, data science, and visualization in other programming languages (R, Julia), you can connect to exposed PostgreSQL and work with data in the comfort of your popular programming language. Alternatively, you can access your data from any sort of technology that supports PostgreSQL.

select c.campaigns_campaign_name, c.order_lines_price , c.revenue

from "475076b1fbe64674aebeeb18e26de53f".compute cselect c.campaign_name, a.price, b.revenue

from (select ol.campaign_id, SUM(ol.price) as price

from demo.demo.order_lines ol

group by ol.campaign_id) as a

inner join (select ol.campaign_id, sum(ol.price * ol.quantity) as revenue

from demo.demo.order_lines ol

where ol.order_status = 'Delivered'

group by ol.campaign_id) as b on a.campaign_id = b.campaign_id

inner join demo.demo.campaigns c on c.campaign_id = a.campaign_id

order by c.campaign_name;The figures above are examples of getting data using gooddata_fdw vs. using pure SQL over the database. As you can see, using gooddata_fdw is much more straightforward and does not require any JOINs.

Summary: Headless BI Consumption

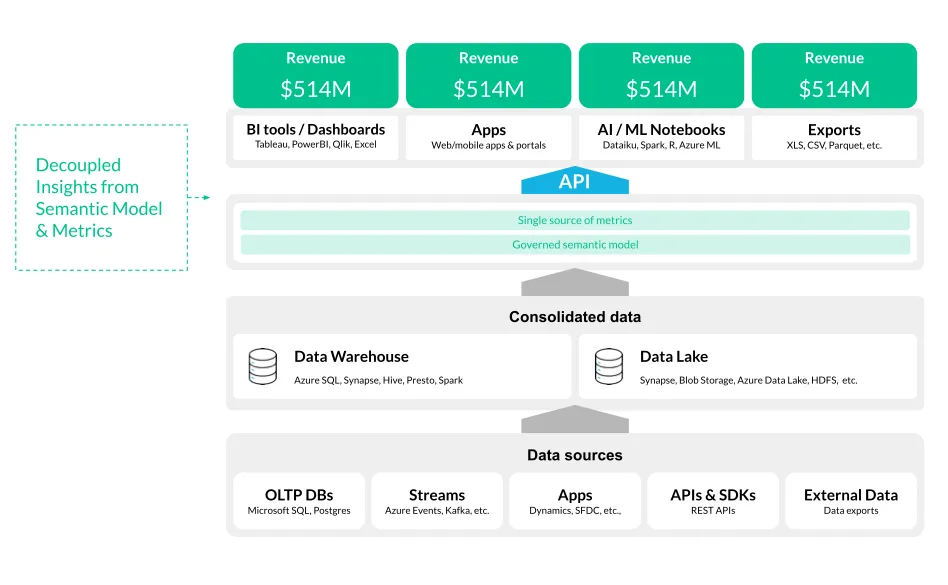

Each of the previous analytics consumption use cases can be summarized as a Python headless BI layer. Headless BI is a concept where the semantic model is treated as a shared service. It's an approach that has multiple benefits, such as establishing a “single source of truth” — in other words, it allows data consumers to work with the same attributes, facts, and metrics, and thus producing consistent outputs. Another benefit is a “don't repeat yourself” principle where complex aggregations, facts, and metrics are defined only once and available to all data consumers.

Headless BI provides consistent results across multiple tools and platforms.

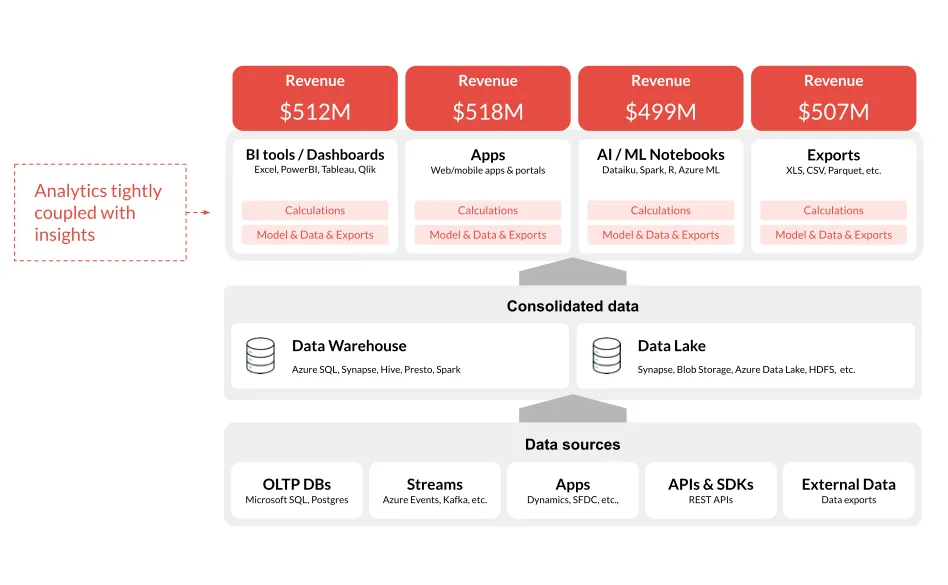

Without the headless BI approach, different analytics tools and platforms yield different outputs.

Hands-On Experience

GoodData's Python SDK allows you to enjoy composable data and reusable analytics in your Python script. It is open-source, meaning that you can easily see what’s happening, and, at the same time, you are welcome to contribute. The aforementioned use cases highlight the power of GoodData's Python SDK, and they’re just a fraction of what you can do.

Do you find these Python modules and specific use cases interesting? Try them out, and feel free to share your experience with GoodData.

Experience GoodData in Action

Discover how our platform brings data, analytics, and AI together — through interactive product walkthroughs.

Explore product tours